IBM pioneers the enterprise AI field with watsonx

- During IBM Think Singapore, the tech giant talked about how it’s charting the path in enterprise AI.

- In particular, it drew attention to its portfolio of AI solutions.

- watsonx is aimed at meeting five core enterprise needs.

For decades, IBM has been at the forefront of breakthroughs in AI, boasting one of the most comprehensive portfolios of enterprise AI solutions available. In fact, since 2010, the tech giant has delivered market-leading AI capabilities with its Watson products, deployed by over 100 million users across 20 industries.

Then generative AI came. The revolutionary technology, like any new product, had some teething troubles over quality and trustworthiness. But drawing on decades of research, established trust-assurance practices, and mainframe standards, IBM came up with watsonx, a new generative AI platform that will help enterprises design and tune large language models (LLMs) for their operational and business requirements.

watsonx is essentially a reboot of IBM’s Watson AI suite, introduced in 2010. “With the development of foundation models, AI for business is more powerful than ever,” said Arvind Krishna, IBM chairman and CEO, when launching watsonx a few months ago.

With the watsonx platform, the company said it is trying to meet enterprises’ requirements in five areas: interacting and conversing with customers and employees, automating business workflows and internal processes, automating IT processes, protecting against threats, and tackling sustainability goals.

At its annual Think conference in Singapore last week, IBM walked reporters through watsonx. This article covers the main takeaways and my insights from the event.

watsonx: AI for business explained

Unlike OpenAI, Microsoft, Google, and others, IBM stayed out of the consumer-facing AI frenzy driven by ChatGPT. Its focus is on enterprises, including governments. “It’s designed for enterprise and targeted for business domains to solve real business problems that drive quick gains in productivity,” Sriram Raghavan, VP at IBM Research AI, told reporters during a panel session at IBM Think Singapore.

Sriram Raghavan, VP, IBM Research AI.

Raghavan meant that watsonx empowers organizations to be AI value creators, not mere users. With watsonx, enterprises are not limited to prompting someone else’s AI model with no control over the model or the data.

watsonx allows users to train, fine-tune, deploy, and govern the data and AI models they bring to the platform, and they fully own the value they create. As a cloud-native AI and data platform, watsonx consists of watsonx.ai, watsonx.data, and watsonx.governance.

- watsonx.ai: a studio for foundation models, generative AI, and machine learning.

- watsonx.data: a data store built on an open lakehouse architecture.

- watsonx.governance: a toolkit for responsible AI workflows.

In short, watsonx.ai allows AI developers to harness models offered by IBM and the Hugging Face community to tackle a broad spectrum of AI development tasks. These models come pre-trained and geared to handle various NLP tasks, encompassing question answering, content generation, summarization, text classification, and data extraction.

New foundation model day for watsonx.

Future releases will expand the array of IBM-trained proprietary foundation models, facilitating efficient specialization in specific domains and tasks. Meanwhile, IBM’s watsonx.data assists clients in overcoming challenges related to data volume, complexity, cost, and governance as they scale their AI workloads.

The platform lets users seamlessly access their data, whether stored in the cloud or on-premises, through a single entry point. This approach dramatically simplifies data access for non-technical users while ensuring security and compliance. The significance of watsonx.data is that it empowers those non-technical users by granting them self-service access to enterprise-grade trustworthy data within a unified collaborative platform.

It also reinforces security and compliance protocols through centralized governance and local automated policy enforcement. Soon, watsonx.data will harness the capabilities of watsonx.ai foundation models to simplify and expedite user interactions with data.

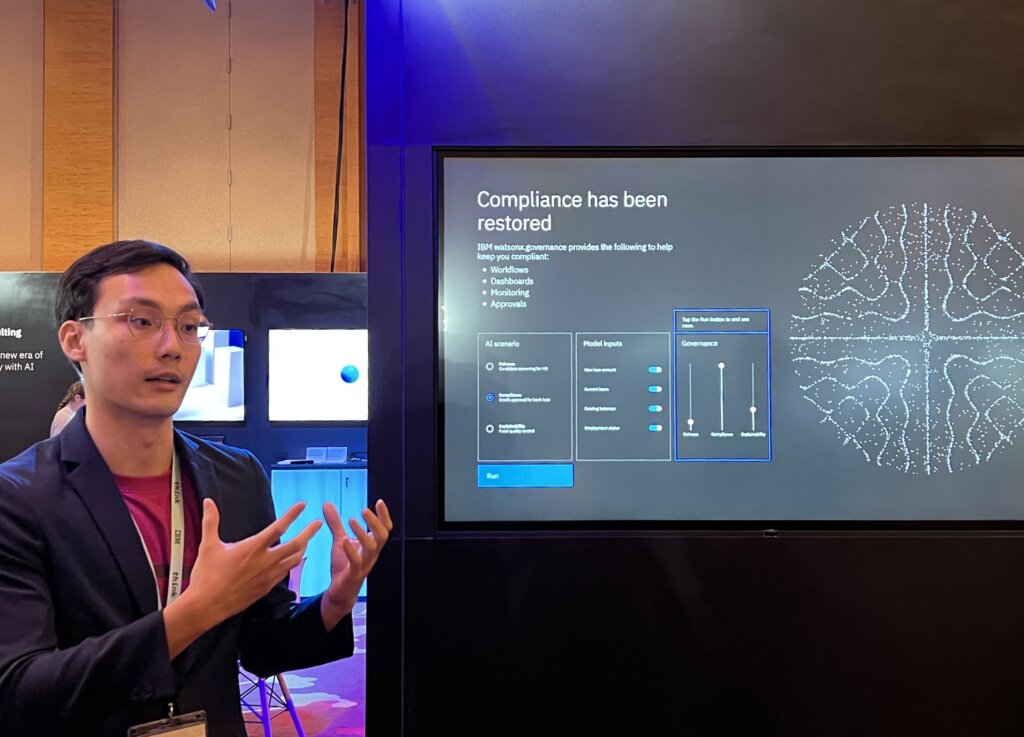

IBM demonstrating ways enterprises can put governance to work using watsonx.governance

Then there’s watsonx.governance, leveraging IBM’s robust AI governance capabilities to assist organizations in implementing end-to-end lifecycle governance, mitigating risks, and effectively managing compliance with the evolving landscape of AI and industry regulations. The advantage is that watsonx.governance empowers organizations to lead and oversee their company’s AI initiatives.

IBM’s AI roadmap

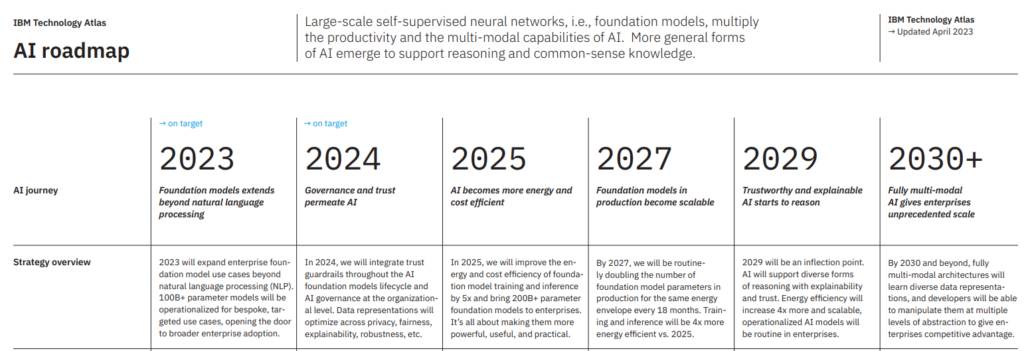

When IBM launched watsonx, it also unveiled its AI roadmap, which runs from 2023 to 2030 and beyond. While the goal this year is to expand enterprise foundation model use cases beyond NLP, IBM expects 2024 to be the year it integrates guardrails throughout the AI foundation model lifecycle and AI governance at the organizational level.

IBM AI Roadmap. Source: IBM

In fact, IBM, according to CEO and chairman Arvind Krishna, believes smart regulation should be based on three core tenets:

- Regulate AI risk, not AI algorithms.

- Make AI creators and deployers accountable, not immune to liability.

- Support open AI innovation, not an AI licensing regime.

“If AI is not deployed responsibly, it could have real-world consequences – especially in sensitive, safety-critical areas. This is a serious challenge we must overcome, and it is precisely why we urge Congress and the Administration to enact smart regulation now,” Krishna said in a LinkedIn post dated September 14.

By 2025, IBM believes AI will become more energy- and cost-efficient. “In 2025, we will improve the energy and cost efficiency of foundation model training and inference by five times and bring 200 billion+ parameter foundation models to enterprises,” the roadmap reads.

In 2027, IBM expects foundation models in production to become scalable. By that year, it will be routinely doubling the number of foundation model parameters in output for the same energy envelope every 18 months. IBM says training and inference will be four times more energy efficient by then than in 2025.
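To put the 18-month doubling claim in concrete terms, here is a minimal Python sketch. It assumes a clean exponential, with parameters doubling exactly every 18 months at a fixed energy budget, which is a simplification for illustration and not IBM's own model. The function name is hypothetical, and the 200-billion starting point is borrowed from the 2025 roadmap figure purely as an example.

```python
def parameter_growth_factor(months: float, doubling_period_months: float = 18.0) -> float:
    """Growth factor after `months`, assuming one doubling per period (an idealization)."""
    return 2.0 ** (months / doubling_period_months)


# Hypothetical starting point: a 200-billion-parameter model (the 2025 roadmap figure).
start_params = 200e9

for months in (18, 36, 54):
    factor = parameter_growth_factor(months)
    billions = start_params * factor / 1e9
    print(f"after {months} months: {factor:.0f}x -> {billions:.0f}B parameters")
```

Under these assumptions, three doubling periods (four and a half years) would take a model from 200 billion to 1.6 trillion parameters within the same energy envelope.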

2029 will be an inflection point, according to Big Blue. “AI will support diverse forms of reasoning with explainability and trust.” Energy efficiency will increase four times, and operationalized AI models are predicted to become routine in enterprises.