The robots are coming. But don’t panic: it’s mainly the software robots that are coming, and we’re (mostly) in control of what they’re doing.

Hardware robots are kind of coming too, but they’re still mainly found in heavyweight industrial engineering deployments such as factories (as they have been, at their most basic level, for some years)… and when they do surface in our homes, they’re still mostly those circular robot floor vacuums and the odd electronic toaster.

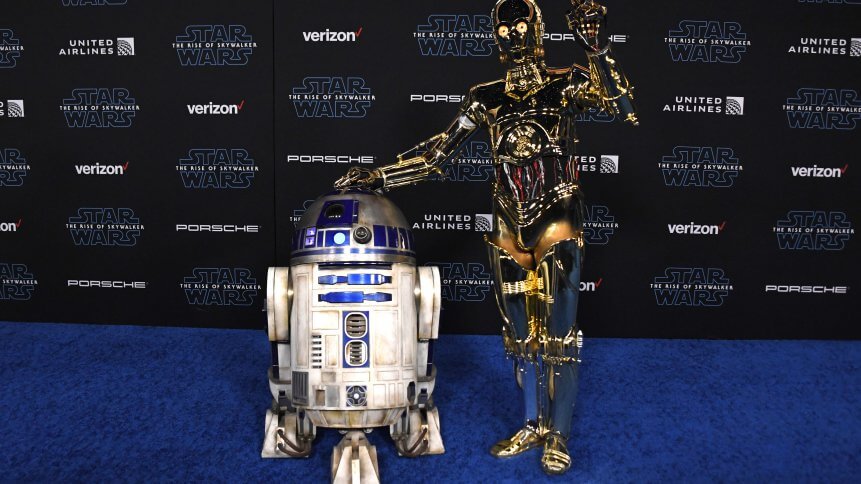

As we stand on the cusp of 2020, a web search for ‘house robots’ is more likely to turn up the home team killer robots on BBC television’s Robot Wars show than it is to offer you a personal C-3PO.

Software robot ‘bots’

The robots we’re talking about are software robots.

Also variously known as Robotic Process Automation (RPA) tools, or simply by the shortened form ‘bots’, these robots have no real physical form; the closest they come is the Graphical User Interface (GUI) that they interact with and enter user information into. They are simply pieces of code designed to execute tasks for us.

Given that we now accept the likelihood of an increasing amount of Artificial Intelligence (AI) being brought to bear in both enterprise and consumer software, what’s next for our robotic future as we turn the decade?

Time to operationalize!

We can build all the software robotics we want, but if we can’t apply it to real-world working environments then these innovations risk being left on the (virtual) shelf in experimental concept labs. To borrow a phrase from Geoffrey Moore, robots need to cross the chasm if they are going to start working for us in productive everyday roles.

It is because of this chasm-crossing reality that we talk about the need to operationalize bots. This is the leap we need to take… and that means putting the bots through boot camp training for real-world interaction and integration with us humans.

We can’t just let the bots loose on all of our work processes; we need higher-level software management platform intelligence to reliably deploy, operationalize and monitor bots. Only by starting comparatively small and doing this at a more granular level can we hope to scale software robots all the way up to mass user adoption.

Bot tinkering

But what does it take to tune up and tinker with a bot? How do we troubleshoot implementation challenges and optimize workflows that now feature software bot intelligence? Do we need to worry about the quality of service we’re getting from our bots… and where do we put the oil into these things in the first place?

A growing number of vendors now operate in this space. While they don’t quite call themselves bot engineers, they do specialize in bot reliability, using techniques that include lower-level software ‘event’ logging.

Other techniques include cross-bot telemetry monitoring, which attempts to provide insight into how bots interact with and affect one another. Ingesting data from the software robots themselves is also important, because it helps teams analyze patterns and workflows, uncover the source of failures and detect anomalies earlier.
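To make the idea of ingesting bot event data a little more concrete, here is a minimal sketch in Python. It assumes a hypothetical event schema (`BotEvent` with a bot ID, task name, duration and success flag) and uses a simple z-score on task duration as one basic anomaly check. This is an illustration only, not how any particular vendor’s product works, and it compares bots side by side rather than modelling how they actually affect one another.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class BotEvent:
    """One logged event from a software bot (hypothetical schema)."""
    bot_id: str
    task: str
    duration_s: float
    succeeded: bool


def failure_rates(events):
    """Return the fraction of failed tasks for each bot."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for e in events:
        totals[e.bot_id] += 1
        if not e.succeeded:
            failures[e.bot_id] += 1
    return {bot: failures[bot] / totals[bot] for bot in totals}


def slow_outliers(events, z_threshold=2.0):
    """Flag events that ran unusually long compared with the same bot's history.

    Uses a simple z-score on task duration; bots with fewer than three
    events, or with no variation in duration, are skipped.
    """
    by_bot = defaultdict(list)
    for e in events:
        by_bot[e.bot_id].append(e)

    flagged = []
    for evs in by_bot.values():
        durations = [e.duration_s for e in evs]
        if len(durations) < 3:
            continue
        mu, sigma = mean(durations), stdev(durations)
        if sigma == 0:
            continue
        flagged.extend(e for e in evs if (e.duration_s - mu) / sigma > z_threshold)
    return flagged


if __name__ == "__main__":
    # Hypothetical sample log: one bot with a single very slow run,
    # another bot that fails half of its tasks.
    log = [BotEvent("invoice-bot", "process_invoice", d, True)
           for d in [10, 11, 9, 10, 10, 11, 9, 10, 60]]
    log += [BotEvent("transfer-bot", "copy_record", 5.0, ok)
            for ok in [True, False, True, False]]
    print(failure_rates(log))
    for e in slow_outliers(log):
        print("anomaly:", e)
```

On this sample data, the sketch reports a 50% failure rate for the hypothetical `transfer-bot` and flags the 60-second `invoice-bot` run as an anomaly. A real monitoring platform would use richer telemetry and sturdier statistics than a single z-score threshold.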

“Bots can automate thousands of manual repetitive tasks such as transferring information between departments or invoice processing, but only if companies have the right tools and processes in place to use them effectively,” said Ram Sathia, vice president of automation at ProKarma.

Sathia recommends implementing bots only in environments where enterprises can observe, monitor and trace them, and be alerted to bot failures. This helps keep bot operationalization efficient and ongoing operations smooth.

“A new, connected way of working is coming where humans, bots, and objects interact. This means that platforms and tools have to evolve to handle complex interactions between humans and bots in order to drive successful business outcomes,” added Sathia.

Putting bots to work

All of this leads us to the need for a human-to-bot touchpoint. We’ve mentioned GUI readouts already, but we also need a way to convey bot performance, health and status to non-technical workers. After all, along with bot operationalization, we also need bot democratization so that everyone can have their own (software) robot.

Logically enough then, we will turn to visualization tools (graphs, heat maps and so on) to offer a more accessible overview of bot performance that is customizable for business leaders and individual business units. This, for now at least, completes the circle of bot empowerment that we will need going into the decade ahead.

Where we will be in this discussion in 10 years’ time is almost impossible to predict. We can only imagine that software robotics will continue to develop a greater understanding of human sentiment and the nuances of language as it learns more about how we need to live our lives.

The robots are still mostly virtualized and abstracted software-based things — you can’t stroke them or put oil in them yet, but there are still ways to look after them.

There, there, nice robot, happy new year.