IT management tools must reflect our ‘distributed reality’

Your desktop computer or laptop is not alone. Okay, it’s true: many of the actions a user performs in their day-to-day interaction with their computing devices happen in solitude, within the confines of one application, on one keyboard, running on the single processor that the machine ships with.

But ‘lone computing’ (for want of a better term) has become increasingly rare in the web-connected age of cloud services.

Computing today is heavily networked, often collaboratively connected and therefore essentially distributed in its nature.

Many models, common goal

While there are a variety of models to describe different distributed computing structures, they all share one trait: one computer connects to another so that some collectively agreed end goal can be completed, or at least worked towards.

In a world of interconnected devices, servers, databases and infrastructure layers, the ability to track computing ‘events’ at the lower substrate level of machine data is essential for monitoring system health, wealth and indeed stealth.

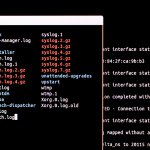

Because nearly every event on every machine is recorded in a log file that describes its action, log file management and log analytics have spawned a whole tier of the IT industry devoted to managing and analyzing the world of machine data.

Often closely associated with Security Information and Event Management (SIEM), log analytics specialists include Datadog, LogRhythm, Elasticsearch, Sumo Logic, Micro Focus, IBM and the curiously named Splunk.

Splunk gets its name from spelunking, the American term for caving or potholing. The parallel is drawn because spelunkers wade through tortuous underground cave systems, while Splunkers navigate their way through cavernous amounts of noisy machine data.

Real-time observability

With so much machine data flying around (let’s not forget, users’ data is a drop in the ocean compared to the swathes of machine-to-machine data created in the Internet of Things), it should come as no surprise that log analytics needs to deliver real-time observability.

As something of a direct answer to this challenge, Splunk has this month enhanced its IT Operations portfolio, focusing on real-time observability for cloud infrastructure and microservices.

This, if you will, is the intersection point between IT Operations Management (ITOM) and Artificial Intelligence for IT Operations (AIOps), or perhaps ITOMAIOps (pronounced: Aye-Tom-Ayups).

The company says that the addition of the recently acquired SignalFx allows Splunk to provide an observability portfolio for organizations at every stage of their cloud journey, from homegrown on-premises applications to cloud-native applications.

Streaming analytics

Splunk acquired SignalFx in October 2019. SignalFx is an Application Performance Management (APM) software vendor with specialist skills in real-time monitoring and metrics for cloud infrastructure, microservices and applications. Among the SignalFx goodies that Splunk gets are technologies aligned for streaming analytics, such as the NoSample architecture for distributed tracing, a method used to profile, analyze and monitor applications that rely upon distributed microservices architectures.
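Distributed tracing works by tagging each unit of work (a ‘span’) with the ID of the request (the ‘trace’) it belongs to and the ID of its parent span, so that one request’s path across many microservices can be stitched back together afterwards. The Python below is a minimal sketch of that reconstruction step, assuming a hypothetical `Span` record and `build_trace_tree` helper; it is not SignalFx’s data model or API, and it simply keeps every span it is given.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Span:
    # Hypothetical span record; field names are illustrative, not SignalFx's schema.
    trace_id: str             # shared by every span belonging to one request
    span_id: str              # unique ID of this unit of work
    parent_id: Optional[str]  # None marks the root span of the trace
    service: str
    start_ms: int
    end_ms: int

    @property
    def duration_ms(self) -> int:
        return self.end_ms - self.start_ms


def build_trace_tree(spans: List[Span], trace_id: str) -> List[str]:
    """Return indented lines showing one request's path through the services."""
    # Index this trace's spans by parent so each span can find its children.
    children: Dict[Optional[str], List[Span]] = defaultdict(list)
    for span in spans:
        if span.trace_id == trace_id:
            children[span.parent_id].append(span)

    lines: List[str] = []

    def walk(parent_id: Optional[str], depth: int) -> None:
        # Visit children in start order so the output follows the request timeline.
        for span in sorted(children[parent_id], key=lambda s: s.start_ms):
            lines.append(f"{'  ' * depth}{span.service}: {span.duration_ms} ms")
            walk(span.span_id, depth + 1)

    walk(None, 0)  # start from the root span(s)
    return lines


spans = [
    Span("t1", "a", None, "frontend", 0, 120),
    Span("t1", "b", "a", "checkout", 10, 100),
    Span("t1", "c", "b", "payments", 20, 90),
    Span("t2", "d", None, "frontend", 0, 30),  # a different request, ignored below
]
for line in build_trace_tree(spans, "t1"):
    print(line)
# frontend: 120 ms
#   checkout: 90 ms
#     payments: 70 ms
```

Keeping every span means even a rare slow request can be rebuilt this way; sampling designs keep only a fraction of traces in exchange for lower storage and processing cost.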

Rick Fitz, Senior Vice President and General Manager of IT Markets at Splunk, says that the firm is dedicated to helping manage what he calls “emerging complexities presented by digital transformation”… and in this regard he wants to highlight his company’s use of Artificial Intelligence (AI) and Machine Learning (ML) to aid IT operations.

“As organizations evolve, they move farther away from a ‘manufacturing model’ of specialization [and so focus on smaller component parts of business] … then silos will be broken down, particularly between DevOps and IT Ops. This means that teams will have to get better at reacting to speed and complexity,” said Fitz.

Splunk says the new software gives everyone from systems administrators to the CIO the same ability to monitor, investigate and act, so that they can work together. Organizations running in the cloud, on-premises or in hybrid environments can use Splunk IT Service Intelligence (ITSI) to get a unified view across organizational silos and to predict and prevent problems.

Our distributed reality

The last decade or so of information technology development has been typified by a drive to break IT apart into smaller, more composable parts that can serve the custom-built use case every organization needs.

There is, of course, no greater example than blockchain, which owes much of its immutability to being distributed: each block is cryptographically linked to the one before it, and any change made in one place needs to be reflected on all the other machines that hold a copy of the chain.
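To see why a change on one machine cannot quietly stick, here is a toy hash chain in Python. It is a sketch under stated assumptions, not any real blockchain’s format: it uses SHA-256 over a JSON encoding of each block, the helper names `block_hash`, `build_chain` and `is_valid` are hypothetical, and there is no consensus protocol.

```python
import copy
import hashlib
import json
from typing import Dict, List

GENESIS_PREV = "0" * 64  # all-zero "previous hash" for the first block (toy convention)


def block_hash(index: int, data: str, prev_hash: str) -> str:
    # Hash the block's contents together with the previous block's hash,
    # so each block commits to the whole history before it.
    payload = json.dumps({"index": index, "data": data, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records: List[str]) -> List[Dict]:
    chain, prev = [], GENESIS_PREV
    for i, data in enumerate(records):
        h = block_hash(i, data, prev)
        chain.append({"index": i, "data": data, "prev": prev, "hash": h})
        prev = h
    return chain


def is_valid(chain: List[Dict]) -> bool:
    # Re-derive every hash and check that each block points at its predecessor.
    prev = GENESIS_PREV
    for block in chain:
        if block["prev"] != prev:
            return False
        if block_hash(block["index"], block["data"], block["prev"]) != block["hash"]:
            return False
        prev = block["hash"]
    return True


node_a = build_chain(["alice pays bob 5", "bob pays carol 2"])
node_b = copy.deepcopy(node_a)             # a second machine's copy
node_b[0]["data"] = "alice pays bob 500"   # tamper with one copy only
print(is_valid(node_a), is_valid(node_b))  # True False

# Recomputing node_b's hashes makes it internally valid again,
# but its latest hash no longer matches the copy held by node_a.
node_b = build_chain([b["data"] for b in node_b])
print(is_valid(node_b), node_b[-1]["hash"] == node_a[-1]["hash"])  # True False
```

The hash links make an edit detectable within one copy; distribution is what makes a rewritten copy stand out, because it no longer matches the copies everyone else holds.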

Suddenly the need for real-time observability and system log metrics makes a lot more sense. We need to know what’s going on at the surface level with users and equipment, but we also need to be able to trace any detected problem back to its root cause, using metrics, traces and logs without context switching, for any given application.
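One way to get from problem detection to root cause without switching tools is to join traces and logs on a shared trace ID. The Python below is a minimal sketch of that idea, assuming hypothetical dictionary records and a `root_cause` helper; the ‘deepest failing span’ rule it uses is a common heuristic chosen for illustration, not Splunk’s method.

```python
from typing import Dict, List, Optional


def root_cause(spans: List[dict], logs: List[dict], trace_id: str) -> Optional[Dict[str, object]]:
    """Pick the deepest failing span in one trace and attach its log lines."""
    by_id = {s["span_id"]: s for s in spans if s["trace_id"] == trace_id}

    def depth(span: dict) -> int:
        # Count hops back to the root; assumes parent links contain no cycles.
        d = 0
        while span["parent_id"] in by_id:
            span = by_id[span["parent_id"]]
            d += 1
        return d

    failing = [s for s in by_id.values() if s["error"]]
    if not failing:
        return None
    # Errors tend to propagate up the call chain, so the deepest failing span
    # is used here as a first guess at where the problem started.
    culprit = max(failing, key=depth)
    related = [
        rec["message"]
        for rec in logs
        if rec["trace_id"] == trace_id and rec["span_id"] == culprit["span_id"]
    ]
    return {"service": culprit["service"], "logs": related}


spans = [
    {"trace_id": "t1", "span_id": "a", "parent_id": None, "service": "frontend", "error": True},
    {"trace_id": "t1", "span_id": "b", "parent_id": "a", "service": "checkout", "error": True},
    {"trace_id": "t1", "span_id": "c", "parent_id": "b", "service": "payments", "error": True},
    {"trace_id": "t1", "span_id": "d", "parent_id": "a", "service": "catalog", "error": False},
]
logs = [
    {"trace_id": "t1", "span_id": "c", "message": "card processor timeout after 5000 ms"},
    {"trace_id": "t1", "span_id": "a", "message": "HTTP 500 returned to client"},
]
print(root_cause(spans, logs, "t1"))
# {'service': 'payments', 'logs': ['card processor timeout after 5000 ms']}
```

The frontend’s HTTP 500 is only the symptom; following the trace down to the payments span surfaces the log line that explains it.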

Yes, log analytics is deep geek level, down in the weeds and under the hood. But every airport you use runs log file analysis, every cloud service you use (from Facebook to Google Docs and so on) runs log file analysis and the fact that you’ve read this story has generated log file reports on your machine and across the network of the web itself.

Captain Kirk has a log… and now you have your own set too.