Why this is the age of continuous intelligence

Back in the last millennium, before the always-on web and before the smartphone, using a computer involved tasks that today seem unfathomable. Loading a computer program involved the insertion of multiple 3.5-inch floppy disks into a machine, which could take most of an hour, especially if you got things wrong.

Connecting to another computer (and to early iterations of the web itself) involved the use of a dial-up modem, which was often laborious, troublesome and flaky. Even when we got to CD-ROM installations, load times were far from instantaneous and the discs themselves weren’t always a failsafe way of encapsulating and thus providing the software.

Still, we put up with all of that, even 20 years ago… probably because many of us remembered much bigger, slower floppy disks and the insane world of software on cassette tape in the decades that preceded.

But that was then and this is now. We’re all happily dialed into a ubiquitous web that delivers always-on software to the mobile devices in our pockets. Very often we don’t even need to install that software: we can simply visit a browser-based version of the app we want, one that benefits from being continuously deployed and continuously updated at the back end.

As a result, we can now say that the age of continuous computing has finally dawned.

The continuous continuum

Today, this use of the term continuous is spread so thick throughout our contemporary IT verbiage that we now talk about CI/CD in computing circles without bothering to explain that this is the initialism denoting Continuous Integration and Continuous Deployment. We, the users, have come to expect this level of continuousness from the apps we consume… and we also want continuous security, slathered generously on top.

But continuous computing at the front-end user level is not possible without an equal and opposite level of always-on monitoring and management at the back end. The industry likes to call this Continuous Intelligence and it is one of the newer and fastest-growing areas of technology.

We’ve already used up CI on Continuous Integration, so we’ll have to try calling Continuous Intelligence ‘Cont-Intel’ and see if that sticks.

Entering the logging business

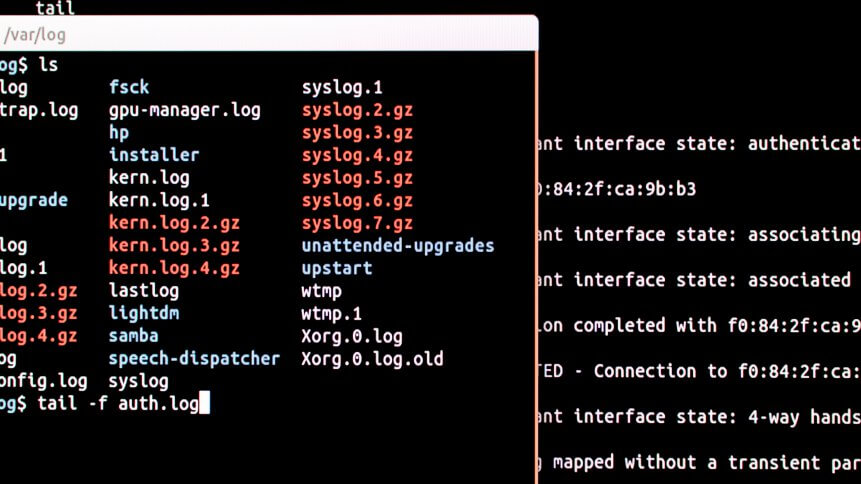

Continuous Intelligence platforms now aim to give IT managers dashboard-based visualizations that show which applications are putting which workloads on which cloud services. They get this insight by tracking the log files that all IT systems generate.

All applications, database services, analytics engines, and other IT systems generate a base-level ‘log’ file whenever they execute, connect or coalesce to perform any single (or indeed multiple) compute action. Being able to track logs as a means of monitoring the needs of the continuous computing cognoscenti is therefore a logical and useful route to take for IT management and security.
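To make the idea of log tracking concrete, here is a minimal Python sketch of how log lines might be rolled up into the kind of “which application, which cloud service” summary a dashboard would chart. The log format, field names and sample values are all assumptions made up for illustration; they do not come from any vendor’s product, and real platforms ingest far messier data at far larger scale.

```python
import re
from collections import defaultdict

# Hypothetical log format, assumed purely for illustration:
#   <timestamp> app=<name> service=<name> bytes=<integer>
LOG_PATTERN = re.compile(
    r"app=(?P<app>\S+)\s+service=(?P<service>\S+)\s+bytes=(?P<bytes>\d+)"
)


def summarize_workloads(lines):
    """Return total bytes per (app, service) pair.

    Lines that do not match the assumed format are skipped.
    """
    totals = defaultdict(int)
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match is None:
            continue
        totals[(match["app"], match["service"])] += int(match["bytes"])
    return dict(totals)


# Made-up sample data, not real logs.
SAMPLE_LOGS = [
    "2020-01-01T10:00:00Z app=checkout service=cloud-a-storage bytes=1200",
    "2020-01-01T10:00:01Z app=search service=cloud-b-compute bytes=300",
    "2020-01-01T10:00:02Z app=checkout service=cloud-a-storage bytes=800",
    "malformed line without the expected fields",
]

summary = summarize_workloads(SAMPLE_LOGS)
for (app, service), total_bytes in sorted(summary.items()):
    print(f"{app} -> {service}: {total_bytes} bytes")
```

The per-pair totals are the raw material; a dashboard would simply keep recomputing something like this over a moving window of fresh log data.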

“Today, unstructured data created by digital services such as IoT, mobile apps, websites, and SaaS services is the primary source of signal for businesses. Without a way to consolidate these signals into a single, real-time view, companies remain stuck in an intelligence gap,” said Christian Beedgen, Co-Founder and CTO, Sumo Logic.

Beedgen’s firm provides cloud-native Continuous Intelligence to build, run and secure modern applications and cloud infrastructures. Where it becomes even more interesting is when we can access the kinds of data patterns being experienced by other companies and map them to our own IT architecture in order to build a more efficient software stack.

Sumo Logic itself provides what it calls Global Intelligence, a means of providing insight into real-time data from its community of over 100,000 users. The data is anonymized and appropriately obfuscated so that customers can get peer-to-peer global benchmarks, alongside an ability to use machine data analytics in data science modeling.

Where Continuous Intelligence is supposed to get us to (if intelligently architected, deployed and managed) is a point where customers can move cloud workloads around depending on where they can be most cost-effectively and efficiently handled at any point in time.
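As a rough illustration of that placement decision, the Python sketch below picks the cheapest environment that currently has room for a workload. The environment names, prices and capacity figures are invented for the example, and the selection rule (lowest cost per CPU-hour among environments with enough free CPUs) is a deliberately simple assumption rather than a description of how any real platform schedules work.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Environment:
    """A hypothetical place a workload could run (cloud or on-prem)."""
    name: str
    cost_per_cpu_hour: float  # assumed price, arbitrary currency units
    free_cpus: int            # assumed spare capacity right now


def cheapest_environment(
    environments: Iterable[Environment], cpus_needed: int
) -> Optional[Environment]:
    """Return the lowest-cost environment with enough free CPUs, or None."""
    candidates = [env for env in environments if env.free_cpus >= cpus_needed]
    if not candidates:
        return None
    return min(candidates, key=lambda env: env.cost_per_cpu_hour)


# Made-up figures for illustration only.
ENVIRONMENTS = [
    Environment("cloud-a", cost_per_cpu_hour=0.05, free_cpus=4),
    Environment("cloud-b", cost_per_cpu_hour=0.07, free_cpus=64),
    Environment("on-prem", cost_per_cpu_hour=0.09, free_cpus=16),
]

small_job = cheapest_environment(ENVIRONMENTS, cpus_needed=2)
large_job = cheapest_environment(ENVIRONMENTS, cpus_needed=8)
too_big = cheapest_environment(ENVIRONMENTS, cpus_needed=128)
```

The point of continuously fed intelligence is that the numbers going into a decision like this are current, so the answer can change from one hour to the next.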

“Until now, adopting cloud meant choosing a vendor, being locked in, having only one choice and facing an uphill battle integrating existing on-prem services. We launched Google Anthos to serve customers who want the option of running their workloads in the environments best suited to their needs,” said Jennifer Lin, Director of Product Management for Anthos at Google Cloud.

Continuous operational visibility

Customers using this kind of technology have called it a means of gaining continuous operational visibility into live running systems. Others have pointed to the use of cloud orchestration systems such as Kubernetes and said that use of Continuous Intelligence services allows them to correlate data sources to understand the full impact of vulnerable software artifacts as they exist across all operational environments, including Kubernetes.

What this leads us to is a new, connected way of doing business, where server-side cloud platform technologies are far more aware of user behavior… and, ultimately, therefore, more directly accountable for any customer’s market success. So it’s a connection point between economics and IT intelligence.

“In what I like to call the ‘intelligence economy’, digital business is producing a tsunami of data that leaders and employees are under constant pressure to understand and act upon to [deliver] great customer experiences. Without real-time insights that are actionable, these businesses risk falling into the intelligence gap, making them more vulnerable to disruption and irrelevance,” said Ramin Sayar, President and CEO for Sumo Logic.

The bottom line here is a new law of economics, or a new law of cloud economics if you wish.

The use of Continuous Intelligence in operation must mirror the operational and economic model of cloud computing. Like cloud, this is an economics that must be always-on, fully backed up, truly flexible and interoperable in order to function.

If we can build this kind of mirror reflection from front end to back end, then we can work in the always-on world of continuous computing effectively.