ChatGPT accounts compromised, sold on the dark web

• ChatGPT accounts found for sale on the dark web.

• Information stealer malware like Raccoon responsible.

• Greater cyber-hygiene urged to slow down credential theft.

Over 101,000 ChatGPT accounts have been sold on the dark web.

That’s the warning from cyberintelligence company Group-IB, operating out of Singapore.

The company noticed the trend towards ChatGPT account vulnerability while analyzing data about the information-stealer class of malware. It noted over a hundred thousand info-stealer logs across the dark web, correlating to ChatGPT account credentials.

In May 2023 alone, bad actors across the dark web posted 26,800 pairs of ChatGPT credentials.

Naturally, OpenAI is keen to point out that the ChatGPT credentials have ended up on the dark web as a result of this class of malware on machines around the world, rather than due to any central data breach at the firm. But in some senses, the truth of this makes the situation much more difficult to handle.

At least in the event of a single central data breach, clean-up events could be handled from a central point and in a coherent, directed way.

Information stealer malware is harder to clean up after because it’s highly distributed around the world – there’s no single attack to deal with, just over 100,000 individual cases of information breach, on different machines.

Why are ChatGPT accounts on the dark web?

ChatGPT was of course the first of the new generative AI platforms to launch in late 2022, meaning that – perverse as this may sound for so new a technology – it’s the legacy candidate in the marketplace. That means it’s had the longest time to attract users, and it’s still regarded as the yardstick generative AI, with enterprises, SMBs, and individuals signing up for accounts with it at the very first opportunity.

Some businesses are embedding ChatGPT into their fundamental operating principles, on the grounds that it gives them a significant business advantage over companies who hesitate, or wait until all the data security and regulation issues have been sorted out.

So what does it mean that so many ChatGPT accounts have been compromised and sold on the dark web?

In the first instance of course, it means that bad actors could potentially be using the ChatGPT accounts of legitimate businesses or individual users for nefarious purposes.

In the second instance, it means the most likely way legitimate users will find out that their ChatGPT accounts have been sold on the dark web is if and when those accounts are reported, whether for abuse, for unusual activity, or for uncharacteristic volumes of ChatGPT use. Given that the generative AI has already been embedded in the working principles and practices of so many businesses, that activity might not look significant enough to raise a red flag.

How do ChatGPT accounts get onto the dark web?

Information stealer malware tends to take credentials that are stored in web browsers, literally stealing them from the browsers’ SQLite databases and abusing the Windows CryptUnprotectData function (the decrypting counterpart of CryptProtectData) to reverse the encryption of the stored secrets.

Devious, but appallingly straightforward. So how do these ChatGPT account credentials end up on the dark web?

Usually, once they’re stolen, they’re packaged with other purloined data into the kind of logs noticed by Group-IB. The logs are sent back to the malware operators’ systems. And from there, ChatGPT account details can be sold on the dark web, along with any other juicy data like credit card details and cryptocurrency wallet credentials.

That’s particularly dangerous in the case of ChatGPT accounts for two reasons. Firstly, because of the conversational and data-dependent nature of ChatGPT, having access to accounts on the dark web could leave people’s – and companies’ – proprietary data and code exposed, either for active use or follow-up ransomware.

A story that’s true, even though Fox News has reported it.

And secondly, there’s the fact that ChatGPT is increasingly everywhere, in both the civilian and the business world. That means that not only might a company’s ChatGPT account have been sold on the dark web, but multiple user accounts within the same company might have been compromised too. And every day the information stealer malware goes unremedied, that danger multiplies.

All of which raises the question of how individuals and companies can know whether their ChatGPT accounts have already been sold on the dark web, and if they have, what they can do about it.

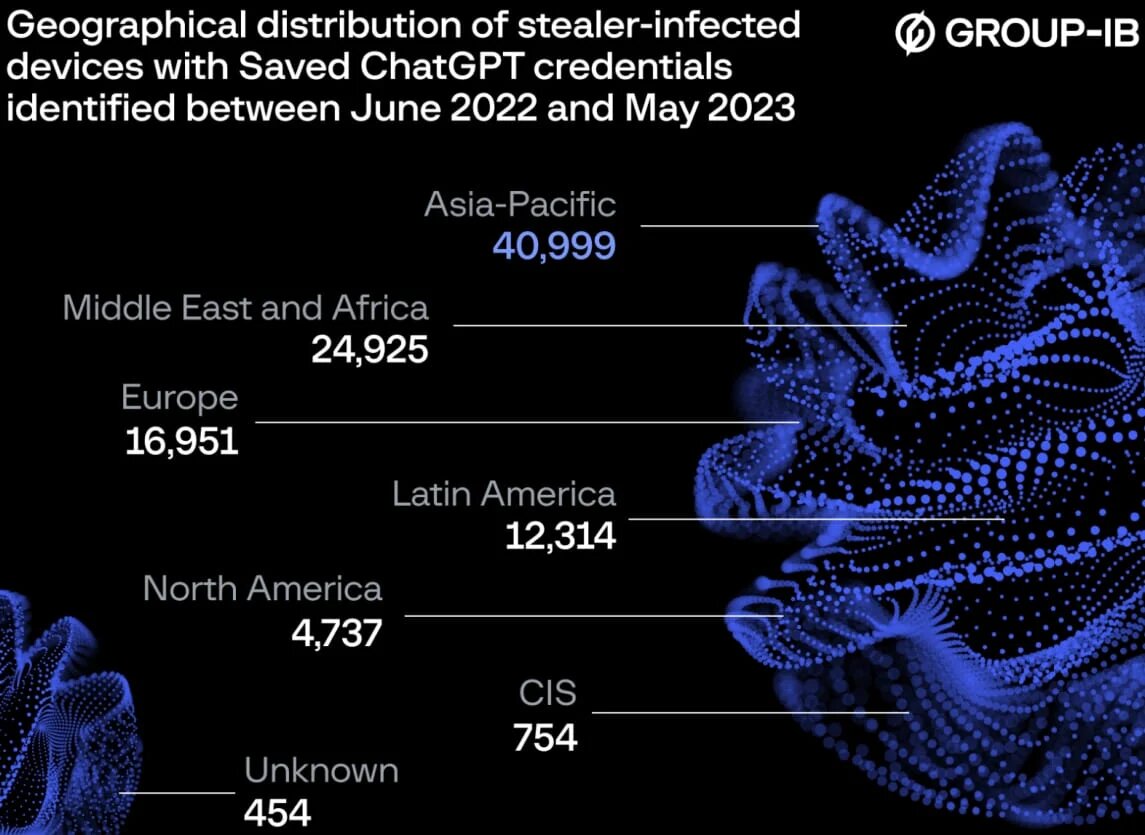

The depressingly honest answer is that investigations are ongoing into whose ChatGPT credentials are available for sale on the dark web. Group-IB has released a geographical breakdown of where most of the ChatGPT credentials are from, and the largest hit has been in the APAC region, where over 40,000 accounts are compromised. Over 16,000 European accounts have turned up on the dark web, while in North America, the figure is just short of 5,000.

This is an issue significantly more rife in the APAC region than anywhere else in the world.

As with most data that’s dumped on the dark web, though, full mitigation seems to be beyond organizations right now. Once sold, compromised ChatGPT accounts are like genies let out of their bottles to do the bidding of the highest bidder. Changing credentials may be effective, inasmuch as it renders the sold credentials out-of-date.

But simply changing credentials is no use if information stealer malware is in your system, and you continue to record your password in a browser.

Be careful with your password management.

You’ll need to invest in more rigorous cybersecurity measures to guard against information stealer malware, and make sure staff throughout your company practice higher levels of cyber-hygiene, so that the theft of your ChatGPT credentials can’t be repeated.
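
As a rough illustration of what that cyber-hygiene could look like in practice, the sketch below lists which saved browser logins exist for a given domain, so they can be moved into a password manager and deleted from the browser. It is a defensive check only, written under assumptions: it expects a copy of a Chromium-style SQLite login store with a `logins` table holding `origin_url` and `username_value` columns, the function name `saved_logins_for` is hypothetical, and other browsers or versions may store things differently. It reads site addresses and usernames, and never touches or decrypts a password.

```python
import sqlite3
from pathlib import Path
from urllib.parse import urlsplit


def saved_logins_for(db_path: str, domain: str) -> list[tuple[str, str]]:
    """Return (origin_url, username) pairs saved for `domain` or its subdomains.

    Assumption: a Chromium-style `logins` table with `origin_url` and
    `username_value` columns. Password data is never read.
    """
    # Open the file read-only so the audit cannot alter the browser's store.
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    conn = sqlite3.connect(uri, uri=True)
    try:
        rows = conn.execute(
            "SELECT origin_url, username_value FROM logins ORDER BY origin_url"
        ).fetchall()
    finally:
        conn.close()

    matches = []
    for origin_url, username in rows:
        host = urlsplit(origin_url).hostname or ""
        # Match the exact host or a true subdomain, not look-alike names.
        if host == domain or host.endswith("." + domain):
            matches.append((origin_url, username))
    return matches
```

Run against a copy of the login store with the browser closed, a call such as `saved_logins_for(path_to_copy, "openai.com")` would show any ChatGPT logins the browser is still holding. Any entry it returns is one an information stealer could lift from that machine.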