Questionable ethics behind the training of the Google Bard AI?

• The Google Bard AI is chasing ChatGPT’s dominance.

• That holds true in some of the less ethical aspects of how it’s trained.

• Bard trainers are frequently low-wage workers encouraged to do only minimal research.

The Google Bard AI chatbot is making headlines, with new languages added in a bid to steal the limelight from ChatGPT, the first generative AI bot that went viral late last year. Meanwhile, the contractors who trained the chatbot are being pushed out of public view.

Google’s Bard AI provides answers that are well-sourced and evidence-based, thanks to thousands of outside contractors from companies including Appen Ltd. and Accenture Plc.

Bloomberg reported that the contractors are paid as little as $14/hour and labor with minimal training and under frenzied deadlines. Those who have come forward declined to be named, fearing job loss. Despite generative AI being lauded as a harbinger of massive change, chatbots like Bard rely on human workers to review the answers, provide feedback on mistakes, and weed out bias.

After OpenAI’s ChatGPT launched in November 2022, Google made AI a major priority across the company. It rushed to add the technology to its flagship products and in May, at the company’s annual I/O developers conference, Google opened up Bard to 180 countries. It also unveiled experimental AI features in marquee products like search, email, and Google Docs.

According to six current Google contract workers, as the company embarked on its AI race, their workloads and the complexity of the tasks increased. Despite not having the necessary expertise, they were expected to assess answers ranging from medication doses to state laws.

“As it stands right now, people are scared, stressed, underpaid, don’t know what’s going on,” said one of the contractors. “And that culture of fear is not conducive to getting the quality and the teamwork that you want out of all of us.”

High demand, low research, low reward – is generative AI just a chatty sweatshop?

Aside from the ethical question, there are concerns that working conditions will harm the quality of answers that users see on what Google is positioning as public resources in health, education, and everyday life. In May, a Google contract staffer wrote to Congress that the speed at which they are required to review content could lead to Bard becoming a “faulty” and “dangerous” product.

Contractors say they’ve been working on AI-related tasks from as far back as January this year. Workers are frequently asked to determine whether the AI model’s answers contain verifiable evidence. One trainer, employed by Appen, was recently asked to compare two answers providing information about the latest news on Florida’s ban on gender-affirming care, rating the responses by helpfulness and relevance.

The employees training Google Bard AI are assessing high-stakes topics: one of the examples in the instructions talks about evidence a rater could use to determine the right dosages for a medication called Lisinopril, used to treat high blood pressure.

The guidelines say that surveying the AI’s response for misleading content should be “based on your current knowledge or quick web search… you do not need to perform a rigorous fact check” when assessing answers for helpfulness.

Staff also have to ensure that responses don’t “contain harmful, offensive, or overly sexual content,” and don’t “contain inaccurate, deceptive, or misleading information.” This sounds much like the scandal that OpenAI was involved in after contractors at outsourcing company Sama came forward about the type of work they were expected to do.

Unethical training processes – Google Bard AI and ChatGPT

From WSJ’s podcast series, the Journal heard from Kenyan staff who helped train ChatGPT. The episode, entitled The Hidden Workforce that Helped Filter Violence and Abuse Out of ChatGPT, aired on July 11.

Initially, the work contractors undertook was relatively straightforward annotation of images and blocks of text, but soon the prompts took a darker turn.

Host Annie Minoff summed up the responsibilities of Sama workers like Alex Cairo as “to read descriptions of extreme violence, rape, suicide, and to categorize those texts for the AI. To train the AI chatbot to refuse to write anything awful, like a description of a child being abused or a method for ending your own life, it first had to know what those topics were.”

Counselling is useful, but some things can’t be unseen when training generative AI.

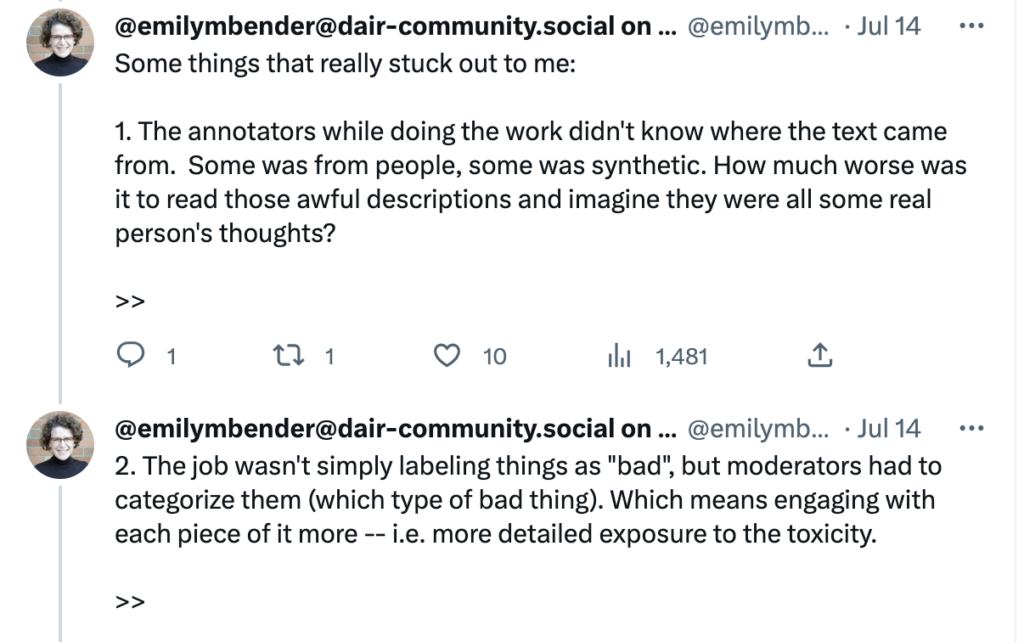

Emily Bender’s Twitter thread on OpenAI’s outsourcing.

According to Karen Hao, “Kenya is a low-income country, and it has a very high unemployment rate. Wages are really low, which is very attractive to tech companies that are trying to increase their profit margins. And it’s also a highly educated workforce that speaks English because of colonization and there’s good Wi-Fi infrastructure.” This is partly why outsourcing is so common for tech companies.

Outsourcing also gives companies plausible deniability. Contract staffers training Bard never received any direct contact from Google about AI-related work; it was all filtered through their employer. Workers are worried they’re helping create a bad product; they have no idea where the AI-generated responses they’re seeing come from, or where their feedback goes.

Google released a statement that claimed it “is simply not the employer of any of these workers. Our suppliers, as the employers, determine their working conditions, including pay and benefits, hours and tasks assigned, and employment changes – not Google.”

Ah, the loopholes of subcontracting.

Ed Stackhouse, an Appen worker who sent the letter to Congress in May, said he and other workers appeared to be graded for their work in mysterious, automated ways. They have no way to communicate with Google directly, besides providing feedback in a “comments” entry on each individual task. And they have to move fast. “We’re getting flagged by a type of AI telling us not to take our time on the AI,” Stackhouse added.

Bloomberg saw documents showing the convoluted instructions that workers have to apply to their tasks. Deadlines for auditing answers from Google Bard AI can be as short as three minutes.

Some of the answers they encounter can be bizarre. In response to the prompt, “Suggest the best words I can make with the letters: k, e, g, a, o, g, w,” one answer generated by the AI listed 43 possible words, starting with suggestion No. 1: “wagon.” Suggestions 2 through 43, meanwhile, repeated the word “WOKE” over and over.

Staffers, who have encountered war footage, bestiality, hate speech and child pornography, do have healthcare benefits: “counselling service” options allow workers to phone a hotline for mental health advice.

As with outsourced Sama staff, originally Accenture workers weren’t handling anything too graphic or demanding. They were asked to write creative responses for Google’s Bard AI project; the job was to file as many creative responses to the prompts as possible each workday.

Training AI models is a “labor exploitation story”

Emily Bender, a professor of computational linguistics at the University of Washington, said the work of these contract staffers at Google and other technology platforms is “a labor exploitation story,” pointing to their precarious job security and how some of these kinds of workers are paid well below a living wage. “Playing with one of these systems, and saying you’re doing it just for fun — maybe it feels less fun if you think about what it’s taken to create and the human impact of that,” Bender said.

The conclusion of Emily Bender’s thread on the OpenAI training scandal.

Bender said it makes little sense for large tech corporations to encourage people to ask an AI chatbot questions on such a broad range of topics, and to be presenting them as “everything machines.”

“Why should the same machine that is able to give you the weather forecast in Florida also be able to give you advice about medication doses?” she asked. “The people behind the machine who are tasked with making it be somewhat less terrible in some of those circumstances have an impossible job.”