Can understanding real-time accents help perfect conversational AI?

While conversational AI has worked wonders for many businesses, the technology still has pain points that can frustrate customers. Most conversational AI solutions are trained to understand English as spoken by native speakers. For other languages, such as Mandarin and French, the AI might also be trained to pick up the native pronunciations.

The global conversational AI market is expected to reach US$12.1 billion by 2025. The growth is driven mostly by organizations implementing more technologies in the customer service industry, especially as remote working disrupts the workflow of customer-facing roles.

At the same time, there is a myriad of conversational AI solutions on the market today. From large tech vendors to startups, each of these solutions is designed to enhance the customer service industry, especially in dealing with customer queries and providing a seamless experience.

Unfortunately, most of these solutions are still unable to understand the broad range of accents in which a particular language is spoken today. Speech-to-text tools also often misunderstand spoken words, especially those of non-native speakers or speakers with an accent.

So what happens when a person speaks with an accent? In today’s globalized society, spoken language has moved away from its native sound. Slang and new catchphrases have become accepted in conversation. However, most conversational AI solutions struggle to deal with this modern problem.

This accent mismatch can become a major source of inefficiency in business and risk serious misunderstandings. To foster seamless communication in all areas of business, education, telemedicine, entertainment and more, conversational AI solution Sanas AI will officially roll out the world’s first real-time speech accent translation technology. The solution will be used by seven business process outsourcers globally, starting in the fall of this year.

Converting accents in real time

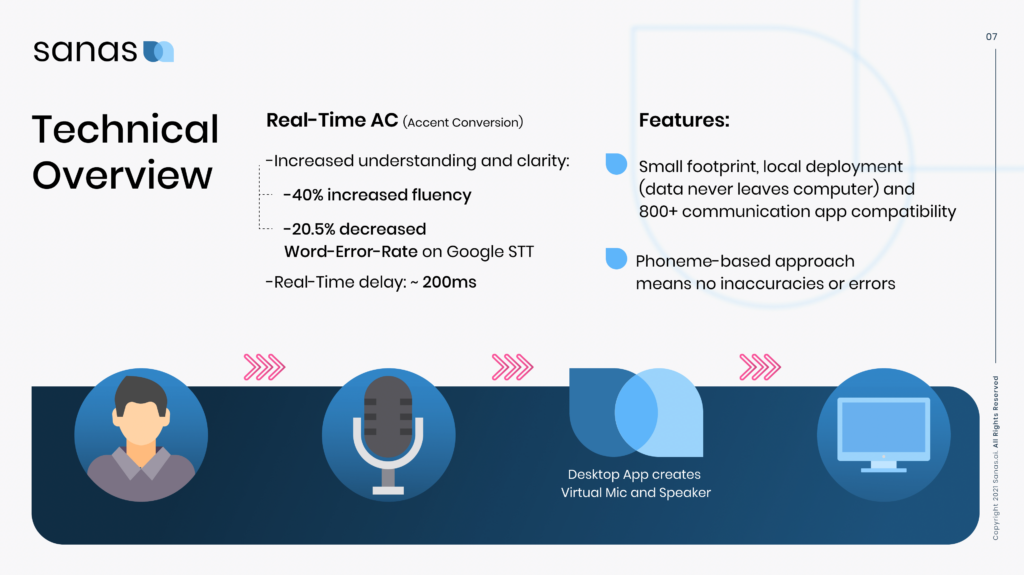

What makes Sanas unique is that the software can intercept audio and convert accents through a speech-to-speech approach without any lag and edge deployment. Sanas builds a virtual bridge between the audio device and the computer, and then sends the new signal to whichever communication app (Zoom, Hangouts, etc.) is in use. Almost instantly, the accent of a customer care representative, for example, will be matched to the accent of an incoming caller.

Source: Sanas
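For readers curious about what a "virtual bridge" between an audio device and a communication app could look like in outline, here is a minimal Python sketch. It is not Sanas's implementation, which has not been published. The names `capture_frames`, `convert_accent`, `VirtualOutput` and `run_bridge` are all hypothetical, and the conversion step is a placeholder that returns audio unchanged where a real system would run a speech-to-speech model.

```python
from collections import deque
from typing import Callable, Deque, Iterable, Iterator, List

Frame = List[int]  # one short chunk of PCM audio samples


def capture_frames(samples: Iterable[int], frame_size: int) -> Iterator[Frame]:
    """Split a stream of samples into fixed-size frames, as an audio driver might."""
    frame: Frame = []
    for sample in samples:
        frame.append(sample)
        if len(frame) == frame_size:
            yield frame
            frame = []
    if frame:
        yield frame  # final, possibly shorter, frame


def convert_accent(frame: Frame) -> Frame:
    """Hypothetical placeholder for a speech-to-speech model.

    It returns a copy of the input so the sketch stays runnable.
    """
    return list(frame)


class VirtualOutput:
    """Hypothetical virtual audio device that a communication app reads from."""

    def __init__(self) -> None:
        self.queue: Deque[Frame] = deque()

    def write(self, frame: Frame) -> None:
        self.queue.append(frame)

    def read(self) -> Frame:
        return self.queue.popleft()


def run_bridge(
    samples: Iterable[int],
    frame_size: int,
    convert: Callable[[Frame], Frame],
    sink: VirtualOutput,
) -> int:
    """Capture frames, convert each one, and forward it to the virtual device.

    Returns the number of frames forwarded.
    """
    forwarded = 0
    for frame in capture_frames(samples, frame_size):
        sink.write(convert(frame))
        forwarded += 1
    return forwarded


if __name__ == "__main__":
    sink = VirtualOutput()
    count = run_bridge(range(10), 4, convert_accent, sink)
    print(count, list(sink.queue))
```

Working frame by frame rather than on a whole recording is what would let a pipeline like this keep delay low: each small chunk is forwarded as soon as it has been converted.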

Created by a team of Stanford student engineers and top speech machine learning experts, the first application for Sanas is in customer care centers, an industry where accent issues can be particularly problematic.

“The world has shrunk, and people are doing business globally, while at the same time they have real difficulty understanding each other. Even getting Google Home or Alexa to understand accents accurately is extremely important. Digital communication is critical for our daily lives. Sanas is striving to make communication easy and free from friction, so people can speak confidently and understand each other, wherever they are and whoever they are trying to communicate with,” said Sanas CEO Maxim Serebryakov.

Having experienced first-hand the communication struggle due to accents, the founders of Sanas plan to introduce accent-matching technology to a range of industries and environments far beyond customer care and technical support. Serebryakov added that there are also creative use cases such as those in entertainment and media where producers can make their films and programming understandable in different parts of the world by matching accents to localities. They are also exploring how machines can better interpret what people are saying.

The use of Sanas to translate accents in real time may just be the game-changer that the conversational AI industry has been waiting for. With more players coming into the market and promising significant improvements to the technology, conversational AI could very well be headed for higher adoption among businesses.