Facial recognition to monitor staff should be banned, says Council of Europe

The use of facial recognition technology in the workplace to judge employees’ engagement, emotions and performance should be outlawed, according to the Council of Europe.

The 47-state human rights organization has called for “strict rules” to avoid significant risks to privacy, and argued that certain applications of facial recognition should be banned altogether to prevent discrimination.

In a new set of guidelines addressed to governments, legislators, and businesses, the Council proposes that the use of facial recognition for the sole purpose of determining a person’s skin color, religious or other belief, sex, racial or ethnic origin, age, health or social status should be prohibited.

It also criticizes the emerging use of “affect recognition” technologies, which can identify emotions and be used to detect personality traits, inner feelings, mental health conditions, or workers’ level of engagement.

These applications should be banned, it said, since they pose important risks in fields such as employment, access to insurance, and education.

“At its best, facial recognition can be convenient, helping us to navigate obstacles in our everyday lives,” said Council of Europe Secretary General Marija Pejčinović Burić.

“At its worst, it threatens our essential human rights, including privacy, equal treatment, and non-discrimination, empowering state authorities and others to monitor and control important aspects of our lives – often without our knowledge or consent.”

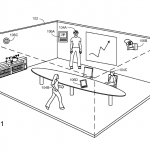

The use of facial recognition for employee monitoring is a particularly controversial area. Microsoft recently filed a patent for a system that monitors employees’ body language and facial expressions during work meetings in order to assign each meeting a ‘quality score’. The filing suggested it could be used both in real-world meetings, in rooms fitted with sensors, and in virtual catch-ups.

It also suggested that employees’ smartphones could be used to monitor whether they were engaged in other tasks such as texting or browsing the internet.

‘People analytics’ has long been a controversial concept. E-commerce giant Amazon is perhaps its most infamous practitioner, using software to monitor, for example, the length of breaks taken or the number of products picked by warehouse employees.

The worry is that this type of technology could also be used to judge employees against an assumed ‘normal’ way of working, or a set of ‘normal’ behavioral traits. That could seriously damage the culture of trust within a business and lead to workplace discrimination.

Announcing the guidelines on Data Privacy Day 2021, the organization said they can help ensure the protection of “people’s personal dignity, human rights and fundamental freedoms, including the security of their personal data.”

The Council urged debate on the use of live facial recognition in public places and schools, covert use by law enforcement, and use by private companies in uncontrolled environments, such as shopping centers for marketing or private security purposes.

In 2019, the developer behind the 67-acre King’s Cross area of central London was found to be using facial recognition across the site to “ensure public safety”. The area includes National Rail, London Underground, and Eurostar stations, as well as restaurants, shops and cafes, and offices occupied by Google and Central Saint Martins college.

The local council said it was unaware that the system was in place, while UK data protection law requires firms to provide clear evidence that they need to record and use people’s images.

In June last year, amid US-wide Black Lives Matter protests and heavy-handed policing, IBM said in a letter to Congress that it would scrap the development of general-purpose facial recognition or analysis software. In the same letter it urged a “national dialogue” on the use of the technology by police and by government agencies such as Immigration and Customs Enforcement (ICE).

Around the same time, Amazon announced a one-year ban on the use of its facial recognition technology by law enforcement agencies.