How neural networks could transform your next video call

- NVIDIA Research is developing an SDK that uses AI to reduce video call bandwidth

- By replacing streams of data-heavy frames with compact keypoint data, it promises fast, high-quality video even on poor connections

- However, some have compared the technology to deepfakes and have questioned its potential to be misused

There’s nothing like a frozen picture to shatter any pretense of having a normal conversation — for all the practicality of the video call, video streaming is still at the mercy of our internet connection.

NVIDIA Research may have cracked this problem by using artificial intelligence (AI) to reduce call bandwidth while simultaneously improving quality.

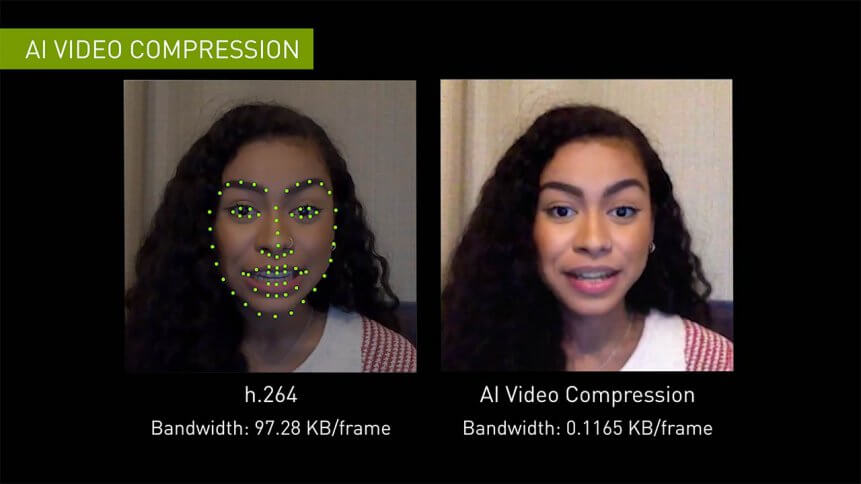

Dubbed NVIDIA Maxine, the solution is a software development kit (SDK) for developers of videoconferencing services. According to the firm, applications based on Maxine can cut video bandwidth to one-tenth of what the current standard H.264 video compression technology, or ‘codec’, requires.

Not only can this provide a better experience for users, but it could dramatically cut costs for providers.

NVIDIA Maxine — how it works

The technology works by replacing traditional, data-heavy video frames with neural data.

With AI-assisted calls, the recipient sees a reference image of the caller. Instead of a stream of high-res images, the system sends specific reference points around the sender’s eyes, nose, and mouth. A generative adversarial network (GAN) then uses the reference image combined with the key points to reconstruct subsequent images.
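The exchange described above can be sketched as two message types and a receiver. This is a minimal illustration, not NVIDIA's implementation or the Maxine API: the class names, the normalised (x, y) keypoint format, and `placeholder_generator` (a stand-in for the trained GAN) are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple, Union

Keypoint = Tuple[float, float]  # (x, y) position, normalised to 0..1 (assumed)


@dataclass
class ReferenceImage:
    """Sent once at the start of the call: a full image of the caller."""
    pixels: bytes


@dataclass
class KeypointUpdate:
    """Sent for each later frame: only the facial reference points."""
    points: List[Keypoint]


Message = Union[ReferenceImage, KeypointUpdate]
Generator = Callable[[bytes, List[Keypoint]], str]


def placeholder_generator(reference: bytes, points: List[Keypoint]) -> str:
    # Hypothetical stand-in for the trained GAN; it only reports its inputs.
    return f"frame from {len(reference)}-byte reference + {len(points)} keypoints"


class Receiver:
    def __init__(self, generator: Generator) -> None:
        self.generator = generator
        self.reference: Optional[bytes] = None

    def handle(self, message: Message) -> str:
        # The first message must carry the reference image; it is kept for reuse.
        if isinstance(message, ReferenceImage):
            self.reference = message.pixels
            return "reference image stored"
        if self.reference is None:
            raise RuntimeError("keypoints arrived before a reference image")
        # Every later frame is rebuilt from the stored image plus new keypoints.
        return self.generator(self.reference, message.points)


receiver = Receiver(placeholder_generator)
print(receiver.handle(ReferenceImage(pixels=b"\x00" * 1000)))
print(receiver.handle(KeypointUpdate(points=[(0.4, 0.5), (0.6, 0.5), (0.5, 0.7)])))
```

The point of the sketch is the asymmetry: the heavy image crosses the network once, while each subsequent frame costs only a short list of coordinates.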

Because these keypoints are so much smaller than full images, far less data is sent, so a user on a much slower connection could still have a clear, functional video chat.
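To get a feel for the size difference, the sketch below packs a set of keypoints into bytes and compares the result with one uncompressed frame. The numbers are illustrative assumptions, not NVIDIA figures: 20 keypoints, two 32-bit floats per keypoint, and a 1280x720 RGB frame. Note that the comparison is against raw pixels, not H.264 output, so it does not reproduce the firm's one-tenth claim.

```python
import struct
from typing import List, Tuple

Keypoint = Tuple[float, float]

NUM_KEYPOINTS = 20  # assumed for illustration; the real count is not given


def pack_keypoints(points: List[Keypoint]) -> bytes:
    """Encode keypoints as a uint16 count followed by little-endian float32 pairs."""
    flat = [coord for point in points for coord in point]
    return struct.pack(f"<H{len(flat)}f", len(points), *flat)


def unpack_keypoints(payload: bytes) -> List[Keypoint]:
    """Inverse of pack_keypoints."""
    (count,) = struct.unpack_from("<H", payload, 0)
    flat = struct.unpack_from(f"<{2 * count}f", payload, 2)
    return list(zip(flat[0::2], flat[1::2]))


def raw_frame_bytes(width: int, height: int, channels: int = 3) -> int:
    """Size of one uncompressed 8-bit frame."""
    return width * height * channels


points = [(0.25, 0.5)] * NUM_KEYPOINTS
payload = pack_keypoints(points)
frame_size = raw_frame_bytes(1280, 720)

print(f"keypoint payload: {len(payload)} bytes")
print(f"raw 720p frame:   {frame_size} bytes")
print(f"ratio:            about {frame_size // len(payload)}x smaller")
```

A real codec narrows this gap considerably, since H.264 already sends far less than raw pixels for each frame, but the sketch shows why a handful of coordinates is cheap to transmit.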

The results have to be seen to be believed…

NVIDIA says the SDK lets developers add a feature that filters out common background noise. Other features include Autoframe, which keeps the camera on a user’s face for a more personal, engaging conversation, even if they get up to wander around the room, and Gaze Correction, which adjusts what people see on screen so that the person you’re talking to appears to be looking at you, even if they’re gazing off to the side.

Developers can also add conversational AI functionality, which can enable translations in real-time and ‘virtual assistants’ to take meeting notes and answer questions in ‘human-like’ voices.

Real-time conversational AI services with NVIDIA Jarvis. Source: NVIDIA

Let’s not forget the ability to use avatars — NVIDIA proudly showcased this feature being used with a giant alien head.

Deepfakes in real-time?

NVIDIA Maxine will be available to AI developers, software partners, computer manufacturers, and startups, so it could start appearing, well, everywhere.

But while the technology could help tackle the issue of low bandwidth, which occasionally has individuals turning off their video entirely, many commenters worry that it will further sever whatever real-life connection video conferencing tools are helping us cling to.

“Basically this is deepfake in real-time,” one YouTube user commented. Others suggested you could potentially “fake yourself” or somebody else into a video meeting, or that it could increase the chance of being phished by a cybercriminal posing as a family member or friend.

Some have also questioned whether the trade-off for lower bandwidth on the sender’s side would be higher CPU consumption on the recipient’s side to reconstruct each frame from the reference image. More cynically, they ask whether this demand would open a further avenue for sales of NVIDIA’s graphics processing units (GPUs).