Autonomous vehicles – why safety takes the front seat

- The highly competitive autonomous vehicle industry has been ‘too eager’ to get vehicles on the roads

- Industry collaboration and open innovation are needed to enhance safety technology and global industry standards

- Five’s Stan Boland and TTTech Auto’s Georg Kopetz join TechHQ to discuss the future of autonomous vehicle safety

Watching Netflix on the ride to work aside, one of the real draws of autonomous vehicles is the elimination of human error from the driving equation.

When fully autonomous vehicles roll on our streets laden with sensors, communications systems and advanced artificial intelligence (AI), we will no longer be at the mercy of distracted, tired, or even drunk drivers, or be at risk of becoming one of the 1 million people killed in road accidents each year – most of which are caused by human negligence.

On the path to fully autonomous transport so far, collisions have rarely been due to technical glitches or system failures. Instead, they’re down to humans changing lanes without warning or, in the tragic case of Elaine Herzberg, who was hit and killed by an Uber self-driving car during a pilot test, attempting to cross a road in the dark.

To say that autonomous vehicle technology will make our roads safer is an oversimplification, and there is a long way to go before any autonomous vehicle technology emerges that its developers can confidently call ‘safe’. The death of one individual is evidence enough that the technology isn’t complete, and that the heady race to the front of the market, which is attracting investment from tech giants, incumbent carmakers, hundreds of new startups and even electronics firms, could come at a significant cost if the industry’s “inadequate safety culture” isn’t tackled head on.

Stan Boland is CEO of Five, a self-driving technology company creating cloud-based development and safety assurance platforms for autonomy programs. Asked by TechHQ whether the likes of Uber and Tesla (the latter’s automated driving systems have been linked to five fatalities) have been too eager to leap forward into real-world trials, his reply was straightforward: “no question.”

The safety challenge

The systems we are able to see in controlled tests today, such as Waymo’s, may seem flawless most of the time. In the wild, Elon Musk’s car brand sold 367,500 semi-autonomous cars last year, and how many made bad press? But achieving AI that can perform as well as, if not better than, a human is incredibly difficult, if not impossible.

British IT entrepreneur and Five CEO Stan Boland studied physics at Cambridge. Source: Five

“We are actually pretty good at driving, as humans,” Boland explained. “In Europe, we will typically have a collision every 150,000 miles. Well, what does that mean for machine performance?”

“Let’s imagine we’re driving in a city at 20 miles an hour, and we take a decision every two seconds – that means that we can afford to make one mistake for every 10 million decisions […] replicating that type of behavior is incredibly difficult when things like deep neural networks typically today make one mistake every hundred decisions.”
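Boland's back-of-the-envelope figure can be reproduced with a short calculation. The sketch below is illustrative only: it uses the numbers quoted above and assumes a constant speed and that one "mistake" corresponds to one collision, neither of which the article states. The function and variable names are hypothetical.

```python
# Figures quoted by Boland in the article.
MILES_PER_COLLISION = 150_000      # typical European driver, per the quote
SPEED_MPH = 20                     # assumed constant city speed
SECONDS_PER_DECISION = 2           # one driving decision every two seconds
DNN_DECISIONS_PER_MISTAKE = 100    # quoted error rate for deep neural networks


def decisions_per_collision(miles, speed_mph, seconds_per_decision):
    """Return how many decisions a driver makes between collisions."""
    hours_driven = miles / speed_mph
    seconds_driven = hours_driven * 3600
    return seconds_driven / seconds_per_decision


human_decisions = decisions_per_collision(
    MILES_PER_COLLISION, SPEED_MPH, SECONDS_PER_DECISION
)

# Assumption: one mistake equals one collision, so the ratio below is
# only a rough indication of the gap Boland describes.
reliability_gap = human_decisions / DNN_DECISIONS_PER_MISTAKE

print(f"Human decisions per collision: {human_decisions:,.0f}")
print(f"Gap versus one mistake per {DNN_DECISIONS_PER_MISTAKE} decisions: "
      f"{reliability_gap:,.0f}x")
```

Under these assumptions the result is 13.5 million decisions per collision, the same order of magnitude as the "one mistake for every 10 million decisions" that Boland cites.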

Discussing a system capable of human-like decision-making amounts to advocating something as complex as the holy grail of artificial general intelligence, Boland said: “We’re really going to have to be very smart about the way we train and develop, the way we fuse data together, the way we use search in context. And then we’re going to have to understand risk and propagate that through to production planning, and to create a system that is as safe as a human one.”

Work towards autonomous vehicle safety “cannot be done lightly”, he continued, and the industry must first establish a deep understanding of the framework and set of capabilities that can generate and prove safety, if the technology is to be accepted not just by regulators, but by society. “I think a lot of these companies have been too quick to get stuff out there,” Boland said.

“We’ve got to find the balance here between the velocity of development, and safety.”

Striking a balance

It’s the mission of The Autonomous to find that balance of controlled progression. This is a collaborative forum launched by TTTech Auto, an Austria-based maker of automated driving safety software platforms, and devoted to shaping the future of safe autonomous mobility through open idea exchange and discussion. Representing the eclectic mix of the autonomous vehicle industry’s entrants, membership comprises car giants like Audi, Volkswagen and Daimler; hardware and chip-making leaders like Intel, Nvidia and Arm; and autonomous technology startups such as Five itself.

The participants agree that safety is the main hurdle to autonomous vehicles’ broad acceptance and, breaking with the norms of this already highly charged, competitive industry, they don’t believe going it alone as single OEMs or tech companies is the wise way to achieve it. Instead, investments, innovations and research can be shared, development frameworks and legal standards hashed out and agreed upon, and regulations sought.

Open innovation for mutual benefit is not something the traditional car industry is a stranger to, and for many incumbents now moving into autonomous vehicle technology, it’s the most viable approach to doing so. Coming together as an industry can ensure the vast banks of knowledge and innovation are shared to overcome the industry’s largest obstacles, enabling an ecosystem to flourish all the sooner.

Small steps first

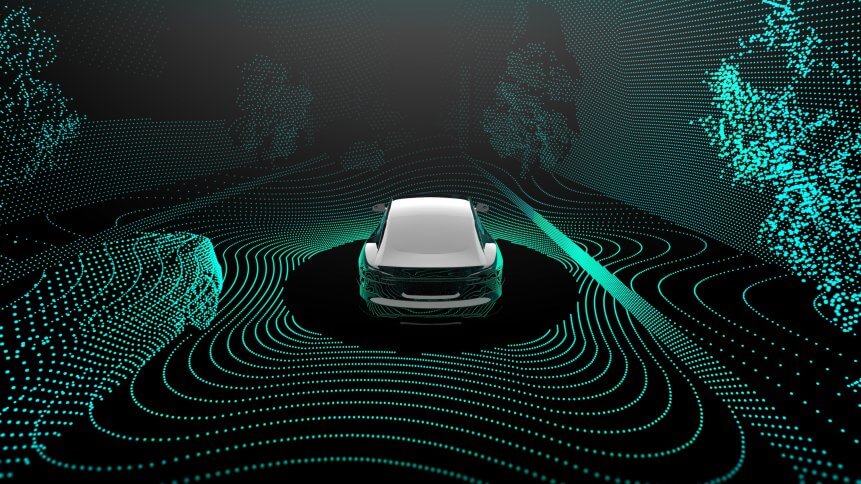

Much of the work in developing and training safe and effective autonomous driving systems will involve test tracks, controlled road trials, and thousands of hours devoted to data-led scenario modelling and the creation of virtual environments, which can simulate weather conditions, road signs, unpredictable traffic and thousands of other variables. But it will also happen if the industry tempers its eagerness and takes small but confident steps forward with the technology already on the road today.

While it’s full, or level 5, autonomous vehicle technology that captures our imaginations, these systems are evolving on the roads now.

Most new, higher-end cars today have level 2 autonomy: computers can take over functions from the driver and are smart enough to moderate speed and steering using multiple data sources. Firms like Mercedes and Lexus have developed vehicle systems that can take over directional, throttle and brake controls using satnav data. Even more advanced systems – dubbed ‘Level 2+’ by Nvidia – can respond to surroundings outside and also monitor for things like driver tiredness on the inside, while Mercedes is close to releasing an “eyes-off” level 3 autonomous S-Class.

AI programs can be trained in modelled, virtual scenarios. Source: Five

“The good news is that we have this level two plus system where we don’t need to replace the operator,” Georg Kopetz, co-founder and CEO of TTTech Auto, told TechHQ. “The more we are deploying those systems now in real use cases, the more data we collect, and the more understanding we collect about different sensor sets, about different, let’s say, road conditions.

“We are connecting those systems to the cloud, so we are understanding much more than we used to, so I believe this emerging and evolving trend to level 2+ will give us a lot of insights, and then moving to level three and level four will do as well.”

Developing safe autonomous systems then will be a process that continues to evolve, steadily, in the coming decade and most likely beyond. But there will always be intrinsic risks, and we have only skimmed the surface of a deep ocean of discussion and debate – led by platforms like The Autonomous – as to what ‘safe’ means in a self-driving vehicle where the state space for testing is near infinite.

“There’s always going to be a validation gap between what the real world really is and what our testing environment is, and that gap is never going to be zero,” said Boland.

“Accidents will happen – but we hope that those gaps will be sufficiently small that they’ll be contained within the envelope that today looks like human driving. And so the accident rate here would be the same or less than we [see] in human driving.”