Data center cooling – a $24B business by 2024

Cloud services are drawing the spotlight at the moment. Whether it’s Netflix or Zoom, we are realizing that the digital products and solutions we rely upon day-to-day at work, and in our personal lives, are made possible by this technology.

Of course, ‘cloud’ is an abstract term that makes the programs we use seem to have no physical origin at all. We know that’s not true: cloud is just access to a server you don’t own and can’t see, and the footprint and power consumption of the data centers behind it are testament to that.

Amazon Web Services effectively powers vast swathes of the internet. Netflix, for one, reportedly spends US$19 million per month on AWS services, using more than 100,000 server instances on AWS for “nearly all its computing and storage needs.” That includes databases, analytics, recommendations and transcoding, ultimately ensuring episodes of Tiger King run seamlessly in 1080p and ‘Playing with Fire’ rolls into ‘Make America Exotic Again’ for millions of concurrent users with zero noticeable lag.

Other internet giants like Facebook, Twitch, Baidu, Twitter, Adobe and even the BBC are said to spend between US$8 million and US$15 million each per month, and that’s just a handful of the ‘big spenders’ with one cloud provider.

These companies are all buying access to virtual computer clusters that offer the attributes of a physical desktop computer, such as CPUs, GPUs, RAM and storage, along with application software like databases and web servers, all powered by sprawling server farms across the world. With footprints running to millions of square feet, data centers are enormous operations, but their truly goliath characteristic is their power consumption.

Every search, click or streamed video sets servers in one or more of these server farms spinning. A single Google search could activate servers in up to eight data centers around the world, and that, of course, consumes energy. According to Fortune, ‘Despacito’, which in 2018 became the first video to hit five billion YouTube views, burned as much energy as 40,000 US homes use in a year.

Unsurprisingly, as the number of cloud services and devices we rely on keeps growing, and as applications become ever more data-heavy, data centers are becoming a serious sustainability consideration.

In 2018, China’s data centers produced 99 million metric tons of carbon dioxide, equivalent to 21 million cars on the road, while worldwide, data centers consume 3 to 5 percent of the total global electricity.
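
The car comparison can be checked with a single division: total emissions divided by the number of cars gives the implied CO2 per car. The sketch below assumes the equivalence is per car per year, which the figure itself does not spell out; the constant and function names are illustrative, not from the source.

```python
# Check the per-car figure implied by the China data center comparison above.
CHINA_DC_CO2_TONNES_2018 = 99_000_000  # 99 million metric tons of CO2 (from the text)
EQUIVALENT_CARS = 21_000_000           # "equivalent to 21 million cars" (from the text)


def implied_tonnes_per_car(total_tonnes: float, cars: float) -> float:
    """CO2 per car implied by dividing total emissions by the car count."""
    return total_tonnes / cars


per_car = implied_tonnes_per_car(CHINA_DC_CO2_TONNES_2018, EQUIVALENT_CARS)
print(f"Implied emissions per car: {per_car:.2f} t CO2/year")
# prints "Implied emissions per car: 4.71 t CO2/year"
```

The result, about 4.7 tonnes per car per year, sits close to the roughly 4.6 tonnes the US EPA uses for a typical passenger vehicle, so the comparison looks internally consistent.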

Larger data centers can demand almost a fifth of the output of a conventional coal power plant. Data center equipment has become so similar to that used in energy production that Google recently decided to build a data center inside a power plant.

This energy consumption is bad for the environment, yes, and also bad for the tech giants whose customers, while enjoying the luxury of anywhere-anytime access, now rank sustainability near the top of what they value in the world’s brands.

And that’s before counting the enormous financial cost of running cloud facilities and, indeed, of cooling them.

Dealing with excess heat is one of the biggest and most expensive parts of running a modern data center. Over the last decade, this has led operators to build data centers in colder climates such as Iceland to improve efficiency, with fiber optic cables piping data down to Europe and North America.

Others turn to water-cooling; in Arizona, where water is a scarce enough resource as it is, Google is building a data center that will be ‘guaranteed’ a million gallons of water a day to keep it cool, up to four million if it hits project milestones.

Given the towering costs – financial and environmental – at stake, reducing data center energy consumption has become an established and lucrative market in its own right. Innovations here are necessary, and the market value will continue to soar in the coming years.

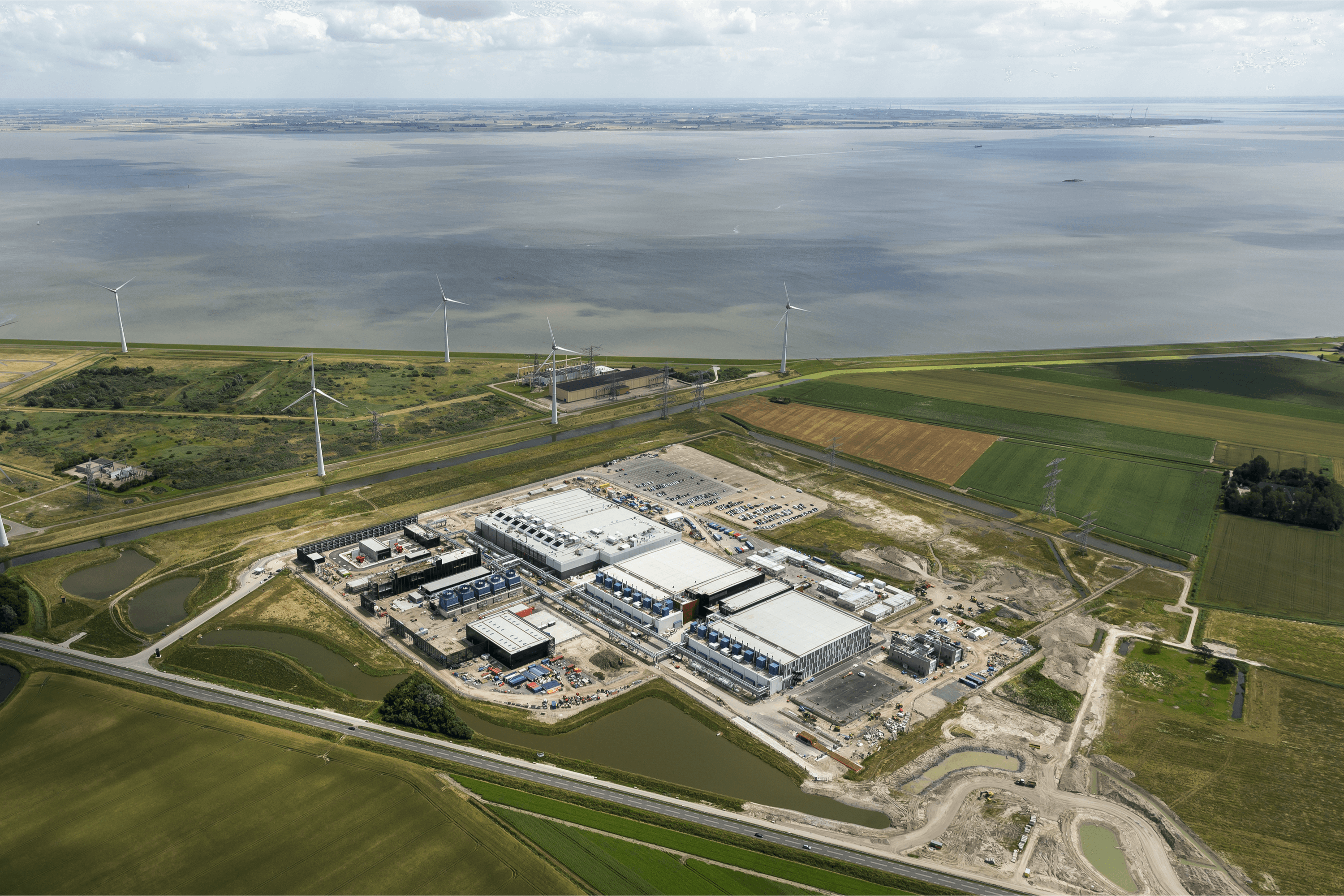

A Google data center in Eemshaven, Netherlands. Source: Shutterstock

With nearly two-thirds of US enterprises set to use hyperscale services next year, a report by Global Market Insights has estimated that the “unprecedented evolution” of cloud computing will lead the data center cooling market to surpass US$20 billion by 2024.

The market for data center racks, an essential feature of any data center, is expected to be worth US$2.8 billion by 2023 as racks are built to accommodate new, high-density servers and to maximize airflow. Some will even have chillers built into the racks themselves.

But the data center cooling market is an expansive one, covering everything from precision air conditioners, chillers and air handling units to water cooling and liquid immersion. Meanwhile, ‘pumped two-phase’ cooling is emerging as a solution where heat loads are higher than traditional air and water cooling systems can handle. Some providers offer systems with half the physical footprint of conventional setups.

Free cooling is a fairly recent development which, rather than using a compressor to chill and recirculate the same air, replaces hot air from the server halls with colder air from outside. This takes expense out of cooling and ultimately means cheaper data center services; the approach is already used by some of the world’s biggest internet companies.
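
The core decision in free cooling can be sketched as a simple rule: if the outside air is cool enough, bring it in and exhaust the hot air; otherwise fall back to compressor-based cooling. The Python below is a minimal illustration, not any operator’s or vendor’s control logic; the setpoint, the margin and all names are hypothetical values chosen for the example.

```python
from enum import Enum


class CoolingMode(Enum):
    FREE_COOLING = "free cooling"  # outside air replaces hot air from the server halls
    MECHANICAL = "mechanical"      # compressor chills and recirculates the same air


# Hypothetical parameters, for illustration only.
SUPPLY_SETPOINT_C = 24.0  # target temperature of air delivered to server inlets
APPROACH_MARGIN_C = 3.0   # outside air must be at least this much cooler than the setpoint


def choose_cooling_mode(outside_temp_c: float,
                        setpoint_c: float = SUPPLY_SETPOINT_C,
                        margin_c: float = APPROACH_MARGIN_C) -> CoolingMode:
    """Use free cooling when outside air is cool enough, otherwise run compressors."""
    if outside_temp_c <= setpoint_c - margin_c:
        return CoolingMode.FREE_COOLING
    return CoolingMode.MECHANICAL


for temp in (5.0, 21.0, 30.0):
    print(f"{temp:>5.1f} °C outside -> {choose_cooling_mode(temp).value}")
```

Real systems typically also weigh humidity and air quality, and often blend outside and recirculated air rather than switching outright; the sketch deliberately ignores all of that to show only the basic trade-off.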

Beyond these innovations, the goal will (and should) be carbon-neutral cooling. While Google may be slurping Arizona’s water supply, in Finland its Hamina data center uses seawater for its cooling system.

Other firms, like Microsoft and Apple, have built or backed renewable energy projects so that their data centers can run on solar or wind power. Such initiatives feed into commitments like Microsoft’s “moonshot” pledge to become carbon negative by 2030.

New approaches to data center cooling are also on the horizon. Stanford University, for example, developed a material that can cool itself below the ambient temperature even in direct sunlight, which could revolutionize the materials used in data center components.

A science team from Louisiana, meanwhile, described a cooling principle based on a material that changes temperature when exposed to a changing magnetic field (the magnetocaloric effect).

Artificial intelligence (AI) can also help improve energy efficiency. Tech giants are using systems that gather data from sensors every five minutes and use algorithms to predict how different combinations of actions will raise or lower energy use.
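
The loop described here (read sensors, predict the energy impact of candidate actions, pick the best) can be sketched as below. It is a toy illustration only: the action names, the `predict_energy_kw` function and every coefficient in it are hypothetical placeholders for the trained model a real operator would use, and the brute-force search over combinations is simply the easiest way to show the idea.

```python
from itertools import product

# Hypothetical controllable settings and the candidate values to try.
ACTION_OPTIONS = {
    "chiller_setpoint_c": [16.0, 18.0, 20.0],
    "fan_speed_pct": [60, 80, 100],
}


def predict_energy_kw(sensors: dict, actions: dict) -> float:
    """Placeholder energy model; a real system would learn this from sensor history."""
    # Made-up relationships: a warmer chiller setpoint saves chiller energy,
    # faster fans cost energy, and hotter weather raises the baseline.
    chiller_kw = 500.0 - 15.0 * actions["chiller_setpoint_c"] + 8.0 * sensors["outside_temp_c"]
    fan_kw = 0.02 * actions["fan_speed_pct"] ** 2
    return chiller_kw + fan_kw


def best_actions(sensors: dict) -> tuple[dict, float]:
    """Evaluate every combination of settings and return the lowest predicted energy."""
    names = list(ACTION_OPTIONS)
    best, best_kw = None, float("inf")
    for values in product(*(ACTION_OPTIONS[n] for n in names)):
        actions = dict(zip(names, values))
        kw = predict_energy_kw(sensors, actions)
        if kw < best_kw:
            best, best_kw = actions, kw
    return best, best_kw


reading = {"outside_temp_c": 25.0}  # one hypothetical five-minute sensor snapshot
print(best_actions(reading))
```

A production system would also need safety constraints on which settings are allowed and a far richer model; the sketch only shows the predict-then-choose pattern.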

AI tools can also spot developing problems in cooling systems before they turn into failures, avoiding costly shutdowns and outages for cloud customers.
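
Early fault detection is often framed as anomaly detection: learn what normal readings look like and flag any that stray too far from it. The sketch below uses a rolling z-score, a simple statistical stand-in rather than the machine-learning models the text refers to; the window size, threshold, function name and sample readings are all hypothetical.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(readings, window=6, threshold=3.0):
    """Yield (index, value) for readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings."""
    history = deque(maxlen=window)
    for i, value in enumerate(readings):
        if len(history) == window:
            mu, sigma = mean(history), stdev(history)
            # Skip the check when the recent history is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                yield i, value
        history.append(value)


# Hypothetical chilled-water return temperatures (°C), one per five-minute sample.
temps = [12.1, 12.3, 12.0, 12.2, 12.4, 12.1, 12.2, 12.3, 15.8, 12.2]
print(list(flag_anomalies(temps)))  # prints [(8, 15.8)]
```

Here the jump to 15.8 °C is flagged as soon as it appears, which is the kind of early warning that lets operators intervene before a cooling fault becomes an outage.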

So, as cloud services scale and more data centers are built and expanded, data center cooling is not only a lucrative market but a very important one: if not for the environment, then at least for your Netflix experience.