Why organizations need a data lake architecture

Data are valuable assets for every organization, and large collections of data (big data) require proper management and secure storage.

Organizations dealing with big data understand the importance of setting up a data repository to ensure a smooth workflow and access to important data when needed.

In large organizations, accessing data can be less straightforward than preferred because of layered security measures and because data come from multiple sources, both internal and external.

This is where the concept of a “data lake” comes in. It was originally described by James Dixon, the founder and CTO of Pentaho, as follows:

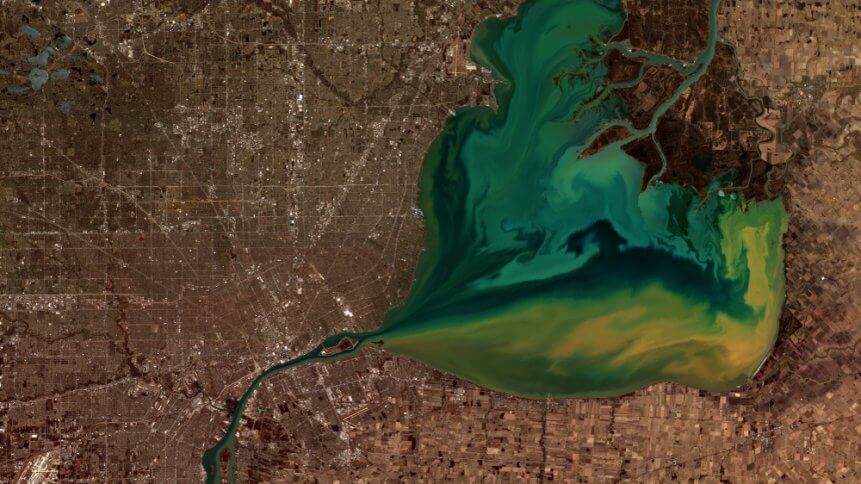

“If you think of a data mart as a store of bottled water – cleansed and packaged and structured for easy consumption – the data lake is a large body of water in a more natural state. The contents of the data lake stream in from a source to fill the lake, and various users of the lake can come to examine, dive in, or take samples.”

The main features of a data lake are that collected data are stored in a single place and are openly accessible to all authorized users.

Organizations can benefit from this open approach of retrieving all their data from a single storage space.

All-in-one storage

Since a data lake holds all data regardless of format or structure, this flexible arrangement allows data from both internal and external sources to be stored in a single place.

When data from multiple sources are combined, they can give businesses valuable insights into consumer behavior.

For example, a retailer may keep records of customers’ purchase histories. When these are combined with survey forms filled in by the same customers, the business can gain insights into consumers’ shopping habits and possibly develop marketing strategies that appeal to consumers with similar product preferences.
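To make the retailer example concrete, here is a minimal sketch of combining two raw sources. It assumes both the purchase records and the survey responses land in the lake as JSON lines that share a hypothetical `customer_id` field; all field names and records are illustrative, not taken from a real system.

```python
"""Minimal sketch: combining two raw sources stored in a data lake.

Assumption: both sources share a hypothetical `customer_id` field;
field names and records are illustrative only.
"""
import json
from collections import defaultdict

# Raw purchase records, stored as they arrived (JSON lines).
PURCHASES = [
    '{"customer_id": 1, "product": "running shoes", "amount": 120.0}',
    '{"customer_id": 1, "product": "water bottle", "amount": 15.0}',
    '{"customer_id": 2, "product": "yoga mat", "amount": 40.0}',
]

# Raw survey responses from a different system.
SURVEYS = [
    '{"customer_id": 1, "preferred_category": "outdoor"}',
    '{"customer_id": 2, "preferred_category": "fitness"}',
]


def combine(purchase_lines, survey_lines):
    """Join purchase history with survey answers on customer_id."""
    spend = defaultdict(float)
    products = defaultdict(list)
    # Aggregate each customer's purchases.
    for line in purchase_lines:
        rec = json.loads(line)
        spend[rec["customer_id"]] += rec["amount"]
        products[rec["customer_id"]].append(rec["product"])

    # Attach the aggregated history to each survey response.
    profiles = {}
    for line in survey_lines:
        rec = json.loads(line)
        cid = rec["customer_id"]
        profiles[cid] = {
            "preferred_category": rec["preferred_category"],
            "total_spend": spend.get(cid, 0.0),
            "products": products.get(cid, []),
        }
    return profiles


if __name__ == "__main__":
    for cid, profile in sorted(combine(PURCHASES, SURVEYS).items()):
        print(cid, profile)
```

Neither source had to be reshaped before it was stored; the join happens only when someone asks the question, which is the flexibility this section describes.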

In this way, combined data can form a bigger picture and help businesses gain insights into their customers.

Time savings and cost cutting for organizations

In a traditional data analytics pipeline such as a “data warehouse”, data are “processed” beforehand and stored in an organized manner.

As in a physical warehouse, data are categorized and packaged before being placed on the shelves.

To maintain the structure and operations of the warehouse, engineers must design storage plans and pipelines that sort and prepare data before they are stored.

Because a data lake stores data in their raw form, this up-front step is unnecessary, and organizations can see lower development and maintenance costs.

Likewise, data engineers save time when pre-storage processing plans are no longer needed, which frees them to work on other projects, such as finding effective ways to collect data and analyzing big data.

Agility in a fast-paced environment

Thanks to its flexible design, a data lake can accommodate new data with little additional cost or implementation time, which matters as organizations see the volume of their data keep growing.

Being able to store new data without restructuring the entire data store lets companies keep pace with digital adoption instead of being held back by changes to their storage infrastructure.

Moreover, because unprocessed data are kept in their original form, they can be given structure when users retrieve them (an approach often called “schema-on-read”), allowing companies to move forward with projects and accomplish organizational goals.
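The idea of data “taking shape” only when retrieved can be illustrated with a minimal schema-on-read sketch. It assumes the lake is simply an in-memory list of raw JSON strings and that a reader describes the structure it wants as a hypothetical mapping of field name to (type, default). Real data lakes use object storage and query engines; this only shows the principle, in contrast to a warehouse, where the structure is fixed before the data are written.

```python
"""Minimal sketch of schema-on-read.

Assumptions: the "lake" is an in-memory list of raw JSON strings, and
the schema is a hypothetical mapping of field name -> (type, default).
"""
import json

# Raw events stored exactly as they arrived. The second record carries a
# field ("channel") that the first one lacks; nothing had to be
# restructured to store it.
RAW_LAKE = [
    '{"order_id": "A1", "amount": "19.99"}',
    '{"order_id": "A2", "amount": "5.00", "channel": "mobile"}',
]

# The reader, not the writer, decides the structure at query time.
SALES_SCHEMA = {
    "order_id": (str, None),
    "amount": (float, 0.0),
    "channel": (str, "unknown"),
}


def read_with_schema(raw_records, schema):
    """Parse raw records and coerce each one to the requested schema."""
    rows = []
    for line in raw_records:
        record = json.loads(line)
        row = {}
        for field, (cast, default) in schema.items():
            value = record.get(field)
            # Convert present values; fill missing fields with the default.
            row[field] = cast(value) if value is not None else default
        rows.append(row)
    return rows


if __name__ == "__main__":
    for row in read_with_schema(RAW_LAKE, SALES_SCHEMA):
        print(row)
```

A different team could read the same `RAW_LAKE` with its own schema (for example, only `order_id` and `channel`) without touching the stored data.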

With 92 percent of large organizations ready to adopt big data and artificial intelligence (AI) initiatives, a comprehensive and simplified data pipeline is essential.

Even though data lakes look promising, there is a risk that they turn into data swamps over time, where large volumes of stored data remain unorganized and unused.

However, by organizing their data, presenting its value visually, and aligning it with organizational goals, organizations can turn their data repository into a treasure vault.