Computer scientists have made a robot that rebels against its user

As automation technologies and robotics permeate our businesses, some jobs may be lost, yes, but others will be augmented.

In the workplaces of tomorrow, whether on factory floors or within towering office blocks, workers will rub shoulders with machines that can make their own decisions.

This shift is unlikely to be jarring; it will probably happen gradually. Even so, the world of business must prepare for these new working partnerships. Understanding our relationships with non-sentient machines is key to a smooth transition.

That is the goal of a new project by researchers at the University of Bristol, but fostering strong relationships, they believe, is not as straightforward as developing robots that just ‘get’ their human overseers.

Computer scientists Janis Stolzenwald, a Ph.D. candidate, and Professor Walterio Mayol-Cuevas know that cooperation is an “essential aspect of automation”, but they don’t believe that building cooperation in at the development stage is enough to establish a working dynamic.

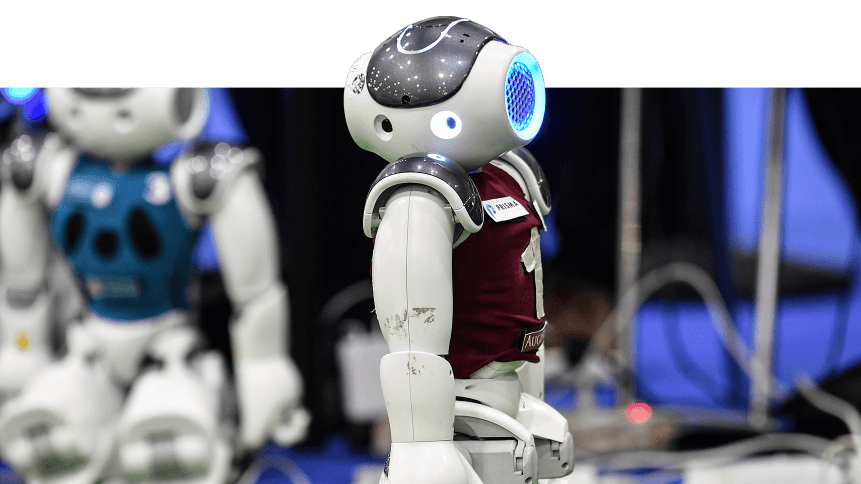

The team has developed intelligent, handheld robots that can complete tasks in collaboration with the user. The robots hold knowledge about the task and can help through guidance, fine-tuned motions, and decisions about task sequences.

In order to achieve the task as efficiently as possible, however, they can “rebel” against user intentions. The result? Irritation among users when decisions aren’t in line with their own plans.

This research is a new and interesting twist on human-robot research, as it aims to first predict what users want and then go against these plans.

“If you are frustrated with a machine that is meant to help you, this is easier to identify and measure than the often elusive signals of human-robot cooperation,” said Professor Mayol-Cuevas.

“If the user is frustrated when we instruct the robot to rebel against their plans, we know the robot understood what they wanted to do.”

For the study, researchers used a prototype that can track the user’s eye gaze and derive short-term predictions about intended actions through machine learning. This knowledge is then used as a basis for the robot’s decisions, such as where to move next.

The Bristol team trained the robot in the study using a set of over 900 training examples from a pick-and-place task carried out by participants. The handheld robot itself was designed by former Ph.D. student Austin Gregg-Smith, who has made the design open-source.
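To make the idea concrete, here is a minimal Python sketch of the loop described above: guess the user's intended target from gaze, then either follow that guess or deliberately go against it. It is an illustration only, not the researchers' method. The study used a machine-learning model trained on recorded examples, whereas this sketch substitutes a simple count of gaze samples near each target. The function names, the `radius` threshold, and the "pick the farthest other target" rebellion rule are all assumptions made for the example.

```python
from collections import Counter
from math import dist


def predict_intended_target(gaze_samples, targets, radius=0.05):
    """Guess the intended target by counting gaze samples that land near it.

    A hypothetical stand-in for the learned predictor described in the article.
    """
    votes = Counter()
    for sample in gaze_samples:
        for name, position in targets.items():
            if dist(sample, position) <= radius:
                votes[name] += 1
    if not votes:
        return None  # the user has not looked at any target yet
    return votes.most_common(1)[0][0]


def choose_robot_target(predicted, targets, rebel=False):
    """Follow the predicted intent, or (assumed rule) move to the farthest other target."""
    if predicted is None:
        return None
    others = [name for name in targets if name != predicted]
    if not rebel or not others:
        return predicted
    return max(others, key=lambda name: dist(targets[name], targets[predicted]))


if __name__ == "__main__":
    # Hypothetical workspace: three pick-and-place targets in 2D coordinates.
    targets = {"A": (0.0, 0.0), "B": (1.0, 0.0), "C": (0.0, 2.0)}
    gaze = [(0.01, 0.0), (0.02, 0.01), (1.0, 0.01)]
    predicted = predict_intended_target(gaze, targets)
    print("predicted:", predicted)
    print("cooperative move:", choose_robot_target(predicted, targets))
    print("rebellious move:", choose_robot_target(predicted, targets, rebel=True))
```

The point of the rebellious branch mirrors the researchers' reasoning: going against the user only causes frustration if the prediction was right in the first place, so frustration becomes a measurable signal that the intent was understood.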

As autonomous robots become more commonplace in industries such as construction, logistics, and warehousing, and are baked into the software, programs and tools we use day to day, the research sheds important light on the complex dynamics of human-machine cooperation.

Daily operations could see new efficiencies as robots are developed that can undertake tasks efficiently without oversight but can ‘step back’, allowing a human to take control, when they detect user intent that conflicts with their actions.

The global robotics market is tipped for double-digit growth in the coming years, with revenue expected to reach US$147 billion within the next six years, up from US$35 billion in 2016.

The surge in spending comes as many industries struggle with rising labor costs and a lack of skilled workers.

In automotive manufacturing, for instance, where robots have been used for decades, machine vision (MV) is used for safety inspections, utilizing technologies such as infrared, 3D imaging and X-ray to relieve human safety inspectors of a time-intensive task.

This type of machine, known as static robotics (the metal, arm-like machines that probably spring to mind when you think of robotics in production lines), currently accounts for the highest amount of spend, owing to its prolific deployment in aerospace, manufacturing, automotive, and even healthcare, where skilled workforces can be hard to come by.